Teradata/dbms/sql

Data Modeling Techniques

There are 3 major modeling approaches:

1️⃣ ER Model (Entity Relationship Model)

The Entity Relationship (ER) Model is a conceptual way of designing databases using:

Entities

Attributes

Relationships

Created by: Peter Chen

Used in:

Traditional OLTP systems

Normalized databases

Banking systems

Transactional systems

2️⃣ Dimensional Modeling

Dimensional Modeling is a data design technique used in Data Warehousing to support:

Reporting

BI tools

Analytics

Fast query performance

It was popularized by Ralph Kimball.

👉 It is optimized for read performance, not transactions.

Used in:

Data Warehousing (Enterprise Data Warehouse, Snowflake / Teradata DW)

BI systems and reporting tools (Power BI, Tableau)

⭐ Two main types:

🔹 Star Schema

A Star Schema is a dimensional model where:

One central Fact table

Multiple Denormalized Dimension tables

Structure looks like a ⭐ (star)

📌 Characteristics

✔ Dimensions are denormalized

✔ Fewer joins

✔ High query performance

✔ Easy to understand

✔ Best for BI tools

🧱 Structure – Example: Loan Analytics

Fact_Loan

Loan_Key

Customer_Key

Channel_Key

Date_Key

Loan_Amount

Dim_Customer

Customer_Key

Name

City

Segment

All customer info in one table.

✔ Fast queries

✔ Easy reporting

✔ Best for Power BI / Tableau
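The following minimal sketch makes the "fewer joins" point concrete. It uses Python's built-in sqlite3 module as a stand-in for the warehouse engine, so this is SQLite, not Teradata syntax. Table and column names follow the Fact_Loan / Dim_Customer example above. The sample rows are invented for illustration.

```python
import sqlite3

# Star schema sketch: one fact table joined to one denormalized dimension.
conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Dim_Customer (
    Customer_Key INTEGER PRIMARY KEY,
    Name TEXT, City TEXT, Segment TEXT      -- all customer info in one table
);
CREATE TABLE Fact_Loan (
    Loan_Key     INTEGER PRIMARY KEY,
    Customer_Key INTEGER REFERENCES Dim_Customer(Customer_Key),
    Channel_Key  INTEGER,
    Date_Key     INTEGER,
    Loan_Amount  REAL
);
INSERT INTO Dim_Customer VALUES
    (1, 'Asha', 'Pune', 'Retail'),
    (2, 'Ravi', 'Mumbai', 'Retail'),
    (3, 'Meera', 'Pune', 'SME');
INSERT INTO Fact_Loan VALUES
    (10, 1, 1, 20240101, 500000),
    (11, 2, 1, 20240102, 250000),
    (12, 3, 2, 20240103, 750000);
""")

def total_loans_by_city(conn):
    """Roll a fact measure up by a dimension attribute: only one join needed."""
    return conn.execute("""
        SELECT d.City, SUM(f.Loan_Amount) AS Total_Amount
        FROM Fact_Loan f
        JOIN Dim_Customer d ON d.Customer_Key = f.Customer_Key
        GROUP BY d.City
        ORDER BY d.City
    """).fetchall()

print(total_loans_by_city(conn))  # [('Mumbai', 250000.0), ('Pune', 1250000.0)]
```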

🎯 Advantages

Fast aggregation queries

Simple design

Easy reporting

Better performance

❌ Disadvantages

Data redundancy in dimensions

Larger storage

🔹 Snowflake Schema

A Snowflake Schema is a dimensional model where:

Fact table in center

Dimension tables are normalized

Looks like a snowflake ❄

📌 Characteristics

✔ Dimensions are normalized

✔ More joins

✔ Less redundancy

✔ Slightly complex
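For contrast, here is a minimal sketch of a snowflaked customer dimension, again with SQLite standing in for the warehouse. The Dim_City table and its State column are hypothetical additions. They exist only to show the extra join that normalization introduces. Sample rows are invented.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Dim_City (City_Key INTEGER PRIMARY KEY, City TEXT, State TEXT);
CREATE TABLE Dim_Customer (
    Customer_Key INTEGER PRIMARY KEY,
    Name         TEXT,
    City_Key     INTEGER REFERENCES Dim_City(City_Key)   -- city details normalized out
);
CREATE TABLE Fact_Loan (
    Loan_Key     INTEGER PRIMARY KEY,
    Customer_Key INTEGER REFERENCES Dim_Customer(Customer_Key),
    Loan_Amount  REAL
);
INSERT INTO Dim_City VALUES (1, 'Pune', 'Maharashtra'), (2, 'Bengaluru', 'Karnataka');
INSERT INTO Dim_Customer VALUES (1, 'Asha', 1), (2, 'Ravi', 2), (3, 'Meera', 1);
INSERT INTO Fact_Loan VALUES (10, 1, 500000), (11, 2, 250000), (12, 3, 750000);
""")

def total_loans_by_state(conn):
    """Same kind of rollup as a star query, but the path to State needs two joins."""
    return conn.execute("""
        SELECT c.State, SUM(f.Loan_Amount) AS Total_Amount
        FROM Fact_Loan f
        JOIN Dim_Customer cu ON cu.Customer_Key = f.Customer_Key
        JOIN Dim_City c      ON c.City_Key = cu.City_Key
        GROUP BY c.State
        ORDER BY c.State
    """).fetchall()

print(total_loans_by_state(conn))  # [('Karnataka', 250000.0), ('Maharashtra', 1250000.0)]
```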

🎯 Advantages

Reduced storage

Better data consistency

Less duplication

❌ Disadvantages

More joins → Slower queries

Complex for BI tools

Harder to maintain

3️⃣ Data Vault

Data Vault is a data warehouse modeling methodology that provides flexible, scalable, and audit-compliant storage of historical enterprise data. It is designed for:

Scalability

Auditability

Historical tracking

Agile development

Created by: Dan Linstedt

👉 It solves limitations of traditional dimensional modeling in large enterprise systems.

✅ Why use Data Vault?

It handles rapidly changing data with traceability.

Great for agile development, big data, and real-time analytics.

Makes data warehouses more adaptable to business and structural changes.

Emphasizes auditing, historical tracking, and parallel loading.

Used in:

Enterprise Data Warehouse

Large scalable systems

Audit tracking

Agile data warehouse

🎯 Data Vault vs. Traditional Star Schema

Traditional Star Schema problems:

Hard to adapt to new sources

Difficult schema changes

Limited audit tracking

Complex reprocessing

Data Vault solves this by:

✔ Separating business keys

✔ Storing history automatically

✔ Making it source-system friendly

✔ Supporting parallel loading

1️⃣ Hub

Stores:

Business Key (natural key)

Load Date

Record Source

Hash Key (usually)

Example:

Hub_Customer

Customer_HK (Hash Key)

Customer_ID (Business Key)

Load_Date

Record_Source

✔ One hub per business entity

✔ No descriptive attributes
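Below is a minimal sketch of a hub load. It assumes a common Data Vault 2.0 convention that this document does not spell out: the business key is trimmed, upper-cased, and MD5-hashed to form the hash key, and a key is inserted only if the hub has not seen it before. SQLite stands in for the target database. `hash_key` and `load_hub_customer` are hypothetical helper names.

```python
import hashlib
import sqlite3

def hash_key(*business_key_parts: str) -> str:
    """Assumed DV 2.0-style hash key: trim + upper-case, join, MD5, hex."""
    normalized = "||".join(part.strip().upper() for part in business_key_parts)
    return hashlib.md5(normalized.encode("utf-8")).hexdigest().upper()

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE Hub_Customer (
    Customer_HK   TEXT PRIMARY KEY,   -- hash of the business key
    Customer_ID   TEXT NOT NULL,      -- business (natural) key
    Load_Date     TEXT NOT NULL,
    Record_Source TEXT NOT NULL       -- no descriptive attributes in a hub
)""")

def load_hub_customer(conn, customer_ids, record_source, load_date):
    """Insert only business keys the hub has not seen yet, so reloads are idempotent."""
    for cid in customer_ids:
        hk = hash_key(cid)
        conn.execute(
            """INSERT INTO Hub_Customer (Customer_HK, Customer_ID, Load_Date, Record_Source)
               SELECT ?, ?, ?, ?
               WHERE NOT EXISTS (SELECT 1 FROM Hub_Customer WHERE Customer_HK = ?)""",
            (hk, cid.strip().upper(), load_date, record_source, hk),
        )
    conn.commit()

load_hub_customer(conn, ["C1001", "C1002"], "CRM", "2024-01-01")
load_hub_customer(conn, ["c1001 ", "C1003"], "LOS", "2024-01-02")  # C1001 arrives again
print(conn.execute("SELECT COUNT(*) FROM Hub_Customer").fetchone()[0])  # 3
```

The same helper can hash several parts for a link key, e.g. `hash_key(loan_id, customer_id)`. The delimiter keeps ("A", "BC") and ("AB", "C") from colliding.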

2️⃣ Link

Stores:

Relationship between hubs

Example:

Link_Loan_Customer

Loan_HK

Customer_HK

Load_Date

Record_Source

✔ Handles M:M relationships

✔ Only keys, no descriptions

3️⃣ Satellite

Stores:

Descriptive attributes

Historical changes

Effective dates

Example:

Sat_Customer_Details

Customer_HK

Name

Address

Phone

Load_Date

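End_Date (optional: many Data Vault 2.0 implementations derive it at query time instead of storing it, so the satellite stays insert-only)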

✔ Keeps full history

✔ Insert-only model
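Here is a minimal insert-only satellite sketch in SQLite. It assumes a Hash_Diff column to detect changes: a hash of the descriptive attributes, which is common Data Vault 2.0 practice but is not shown in the example above. It also derives End_Date at query time instead of updating rows. "HK1" is a placeholder hash key, and the data is invented.

```python
import hashlib
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE Sat_Customer_Details (
    Customer_HK TEXT NOT NULL,
    Load_Date   TEXT NOT NULL,
    Hash_Diff   TEXT NOT NULL,   -- assumed: hash of the descriptive columns
    Name TEXT, Address TEXT, Phone TEXT,
    PRIMARY KEY (Customer_HK, Load_Date)
)""")

def hash_diff(*values):
    return hashlib.md5("||".join(v or "" for v in values).encode("utf-8")).hexdigest()

def load_sat(conn, hk, load_date, name, address, phone):
    """Insert-only load: add a new version only when the attributes changed."""
    new_diff = hash_diff(name, address, phone)
    latest = conn.execute(
        """SELECT Hash_Diff FROM Sat_Customer_Details
           WHERE Customer_HK = ? ORDER BY Load_Date DESC LIMIT 1""",
        (hk,),
    ).fetchone()
    if latest is None or latest[0] != new_diff:
        conn.execute(
            "INSERT INTO Sat_Customer_Details VALUES (?, ?, ?, ?, ?, ?)",
            (hk, load_date, new_diff, name, address, phone),
        )

load_sat(conn, "HK1", "2024-01-01", "Asha", "Pune", "111")
load_sat(conn, "HK1", "2024-02-01", "Asha", "Pune", "111")    # unchanged: skipped
load_sat(conn, "HK1", "2024-03-01", "Asha", "Mumbai", "111")  # new version

# End_Date is derived at query time (the next version's Load_Date): no UPDATEs needed.
history = conn.execute("""
    SELECT s.Load_Date, s.Address,
           (SELECT MIN(n.Load_Date) FROM Sat_Customer_Details n
             WHERE n.Customer_HK = s.Customer_HK AND n.Load_Date > s.Load_Date) AS End_Date
    FROM Sat_Customer_Details s
    WHERE s.Customer_HK = 'HK1'
    ORDER BY s.Load_Date
""").fetchall()
print(history)  # [('2024-01-01', 'Pune', '2024-03-01'), ('2024-03-01', 'Mumbai', None)]
```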

👉 Data Vault usually forms the raw (integration) layer

👉 Star schema usually forms the presentation layer

🔥 Why Enterprises Prefer Data Vault

✔ Multiple source integration

✔ Regulatory compliance

✔ Audit requirement

✔ Banking / Finance systems

✔ Large-scale DW

✅ Comparison (Very Important)

| Model | Used In | Pros | Cons |
|---|---|---|---|
| ER | OLTP | Highly normalized | Complex joins |
| Star | BI / Reporting | Fast queries | Data redundancy |
| Snowflake | BI | Less redundancy | More joins |
| Data Vault | Enterprise DW | Scalable & Auditable | Complex design |

4️⃣ Multi Active Satellites

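A Multi-Active Satellite stores several rows that are valid at the same time for one hub key, for example when a customer has multiple active phone numbers or addresses concurrently. An extra key column (such as a phone type or a sub-sequence number) distinguishes the concurrent rows.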

5️⃣ Point-in-Time (PIT) Tables

Point In Time (PIT) tables in Data Vault modeling are specialized helper tables designed to simplify and optimize querying historical data that comes from multiple satellites related to a single hub or link. They are commonly used in Data Vault 2.0 implementations to improve query performance and reduce complexity.

🧠 Purpose of PIT Tables

In a Data Vault model:

Hubs and satellites are normalized (efficient for storage and history).

But querying them (especially time-based joins) is complex and slow.

PIT tables provide a denormalized snapshot of satellite records for a specific time point.

🔁 This avoids repeated complex joins every time someone wants to get the data “as of” a specific date.

🧱 Structure of a PIT Table

A PIT table usually includes:

Business key hash (Hub Hash Key)

Load dates from multiple satellites

A snapshot date (the “as of” cutoff timestamp that each PIT row describes)
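Here is a minimal PIT sketch in SQLite. It assumes two satellites on one hub and a hypothetical Snapshot_Dates driver table. For each snapshot date, a PIT row records the latest Load_Date of each satellite at or before that date, so “as of” queries become plain equi-joins. Names and data are invented for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Hub_Customer (Customer_HK TEXT PRIMARY KEY);
CREATE TABLE Sat_Customer_Details (Customer_HK TEXT, Load_Date TEXT, Address TEXT);
CREATE TABLE Sat_Customer_Contact (Customer_HK TEXT, Load_Date TEXT, Phone TEXT);
CREATE TABLE Snapshot_Dates (Snapshot_Date TEXT);   -- hypothetical driver table

INSERT INTO Hub_Customer VALUES ('HK1');
INSERT INTO Sat_Customer_Details VALUES ('HK1', '2024-01-01', 'Pune'), ('HK1', '2024-03-01', 'Mumbai');
INSERT INTO Sat_Customer_Contact VALUES ('HK1', '2024-02-01', '111'), ('HK1', '2024-04-01', '222');
INSERT INTO Snapshot_Dates VALUES ('2024-01-31'), ('2024-03-31');

-- One PIT row per hub key per snapshot date: for each satellite, the latest
-- Load_Date at or before the snapshot (NULL if nothing was loaded yet).
CREATE TABLE PIT_Customer AS
SELECT h.Customer_HK,
       d.Snapshot_Date,
       (SELECT MAX(s.Load_Date) FROM Sat_Customer_Details s
         WHERE s.Customer_HK = h.Customer_HK
           AND s.Load_Date <= d.Snapshot_Date) AS Details_Load_Date,
       (SELECT MAX(c.Load_Date) FROM Sat_Customer_Contact c
         WHERE c.Customer_HK = h.Customer_HK
           AND c.Load_Date <= d.Snapshot_Date) AS Contact_Load_Date
FROM Hub_Customer h
CROSS JOIN Snapshot_Dates d;
""")

# "As of" queries are now simple equi-joins through the PIT table.
as_of = conn.execute("""
    SELECT p.Snapshot_Date, s.Address, c.Phone
    FROM PIT_Customer p
    LEFT JOIN Sat_Customer_Details s
           ON s.Customer_HK = p.Customer_HK AND s.Load_Date = p.Details_Load_Date
    LEFT JOIN Sat_Customer_Contact c
           ON c.Customer_HK = p.Customer_HK AND c.Load_Date = p.Contact_Load_Date
    ORDER BY p.Snapshot_Date
""").fetchall()
print(as_of)  # [('2024-01-31', 'Pune', None), ('2024-03-31', 'Mumbai', '111')]
```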

========================================================================

Entity-Relationship

The ER Model is a conceptual framework used for designing and representing the logical structure of a database. It visually outlines entities, their attributes, and the relationships among them.

Entity in DBMS

An Entity is a real-world object, concept, or thing about which data is stored in a database.

In relational databases, entities are represented as tables, and individual entities are represented as rows.

Examples of Entities:

Objects: Employee, Car, Student

Concepts: Course, Event, Reservation

Things: Product, Document, Device

Entity Set

An Entity Set is a collection of similar types of entities (i.e., all rows in a table).

For example, all students in a university form the Student entity set.

⚠️ ER Diagrams represent entity sets, not individual entities.

What are Attributes?

Attributes are properties or characteristics of an entity that describe its details.

📌 Example: For a Student entity, attributes include Roll_No, Name, DOB, Address, etc.

In ER diagrams, attributes are represented by ovals.

Relationship Type and Relationship Set

A Relationship Type represents the association between entity types.

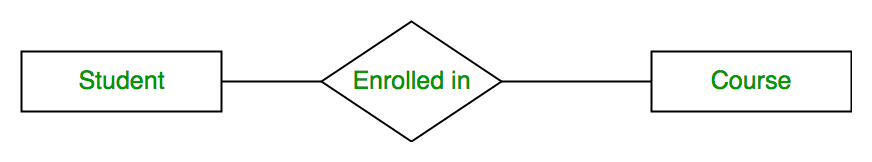

For example, ‘Enrolled in’ is a relationship type between the entity types Student and Course. In an ER diagram, a relationship type is drawn as a diamond connected to the participating entities by lines.

A set of relationships of the same type is known as a relationship set. For example, a relationship set might record S1 as enrolled in C2, S2 as enrolled in C1, and S3 as enrolled in C3.

📌 Degree of a Relationship

The degree of a relationship is the number of entity sets participating in that relationship.

1️⃣ Unary Relationship (Degree = 1)

- Only one entity set is involved
- Also called a recursive relationship

Example:

- Employee manages Employee

👉 One entity set related to itself

2️⃣ Binary Relationship (Degree = 2)

- Two entity sets are involved (most common)

Example:

- Customer places Order

3️⃣ Ternary Relationship (Degree = 3)

- Three entity sets are involved

Example:

- Supplier supplies Product to Store

4️⃣ N-ary Relationship (Degree = n)

- More than 3 entity sets are involved
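A unary (recursive) relationship such as "Employee manages Employee" is usually implemented as a foreign key that points back to the same table, and it is queried with a self-join. Here is a minimal SQLite sketch. Manager_Id is a hypothetical column name, and the rows are invented.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Employee (
    Emp_Id     INTEGER PRIMARY KEY,
    Name       TEXT,
    Manager_Id INTEGER REFERENCES Employee(Emp_Id)   -- points back to the same table
);
INSERT INTO Employee VALUES (1, 'Anita', NULL), (2, 'Bala', 1), (3, 'Chen', 1), (4, 'Dev', 2);
""")

# Self-join: the same table plays both roles (employee and manager).
pairs = conn.execute("""
    SELECT e.Name AS Employee, m.Name AS Manager
    FROM Employee e
    JOIN Employee m ON m.Emp_Id = e.Manager_Id
    ORDER BY e.Emp_Id
""").fetchall()
print(pairs)  # [('Bala', 'Anita'), ('Chen', 'Anita'), ('Dev', 'Bala')]
```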

✅ ACID Properties in Databases

ACID properties are fundamental principles that ensure reliability, consistency, and integrity in database transactions.

Relational databases such as Teradata implement these principles for transaction management.

🔹 What is a Transaction?

A transaction is a unit of work performed in a database. It can consist of one or more SQL statements (INSERT, UPDATE, DELETE).

It ensures data integrity, especially in concurrent and failure-prone environments.

🧾 Example: Transferring ₹100 from Account A to B:

Check balance

Deduct from A

Add to B

All must succeed, or none should apply.
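The transfer above can be sketched with SQLite through Python's sqlite3 module (not Teradata). The CHECK (Balance >= 0) rule plays the role of a consistency constraint. When the debit would break it, the whole transaction is rolled back, including the credit that already ran. The Account table and its columns are hypothetical.

```python
import sqlite3

# isolation_level=None puts the sqlite3 module in autocommit mode, so the
# BEGIN / COMMIT / ROLLBACK below are fully under our control.
conn = sqlite3.connect(":memory:", isolation_level=None)
conn.executescript("""
CREATE TABLE Account (
    Acct_Id TEXT PRIMARY KEY,
    Balance INTEGER NOT NULL CHECK (Balance >= 0)   -- consistency rule
);
INSERT INTO Account VALUES ('A', 150), ('B', 0);
""")

def transfer(conn, src, dst, amount):
    """Credit and debit as one unit of work: both apply, or neither does."""
    conn.execute("BEGIN")
    try:
        conn.execute("UPDATE Account SET Balance = Balance + ? WHERE Acct_Id = ?", (amount, dst))
        conn.execute("UPDATE Account SET Balance = Balance - ? WHERE Acct_Id = ?", (amount, src))
    except sqlite3.IntegrityError:      # CHECK (Balance >= 0) violated
        conn.execute("ROLLBACK")        # also undoes the credit that already ran
        return False
    conn.execute("COMMIT")
    return True

def balances(conn):
    return dict(conn.execute("SELECT Acct_Id, Balance FROM Account ORDER BY Acct_Id"))

first = transfer(conn, "A", "B", 100)   # A: 150 -> 50, B: 0 -> 100
second = transfer(conn, "A", "B", 100)  # would leave A at -50, so it is rolled back
print(first, second, balances(conn))    # True False {'A': 50, 'B': 100}
```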

🔹 ACID Properties

| Property | Description | Example |
|---|---|---|
| A – Atomicity | “All or nothing”: a transaction either completes fully or rolls back | If a money transfer fails after debiting account A but before crediting B, the debit is undone |
| C – Consistency | Ensures database rules and constraints are always met; the database is in a valid state before and after each transaction | If a table requires salary > 0, an update setting salary = -100 is rejected |
| I – Isolation | Concurrent transactions do not interfere; results appear as if transactions executed serially | Two users updating the same row: isolation ensures a correct final value |
| D – Durability | Once a transaction is committed, changes are permanent, even after a crash | After committing a purchase, the order remains recorded in the database |

🔹 ACID in Teradata

- Teradata supports ACID transactions at the statement level and multi-statement level
- Fallback and journaling help maintain durability and fault tolerance
- LOCKING mechanisms maintain isolation

🔹 Why ACID is Important

- Ensures data integrity
- Prevents data corruption during failures
- Supports concurrent access safely
- Critical for financial, healthcare, and other mission-critical systems

📈 Advantages of ACID

Ensures data consistency and integrity

Concurrency control via isolation

Enables recovery after failures

⚠️ Disadvantages

Performance overhead

Complexity in distributed systems

May limit scalability under heavy load

🌍 Where ACID is Critical

Banking: Funds transfer, balance updates

E-commerce: Inventory and order consistency

Healthcare: Patient record accuracy

Enterprise ERP/CRM: Complex transactional workflows

=====================================================================

What is Data Quality?

Data Quality (DQ) refers to the accuracy, completeness, consistency, and reliability of data in a database or data warehouse.

High-quality data ensures trustworthy analytics, reporting, and decision-making.

Poor data quality can lead to wrong business decisions, inefficiencies, and increased costs.

In simple terms:

👉 “Is your data good enough to trust and use for decisions?”

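ACCT UVI (a mnemonic for common data quality dimensions: Accuracy, Completeness, Consistency, Timeliness, Uniqueness, Validity, Integrity)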

🔹 Common Data Quality Issues

- Duplicate records – Same customer entered multiple times
- Missing values – Important columns are NULL or empty
- Incorrect data – Wrong numeric values, typos in text fields
- Inconsistent formats – Dates in multiple formats (DD/MM/YYYY vs MM-DD-YYYY)
- Orphan records – Foreign key refers to a non-existent primary key
- Data drift – Data becomes outdated over time

🔹 Example – Checking Missing Values

SELECT emp_id, name
FROM employee
WHERE phone IS NULL;

🔹 Example – Removing Duplicate Records

DELETE FROM employee
WHERE emp_id NOT IN (
    SELECT MIN(emp_id)
    FROM employee
    GROUP BY name, phone
);

- Keeps only one record (the one with the lowest emp_id) per unique combination of name and phone
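A quick way to sanity-check the de-duplication statement above is to run it against a tiny in-memory SQLite table, together with an orphan-record check from the issue list. This is a minimal sketch. The department table, the columns, and the sample rows are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE department (dept_id INTEGER PRIMARY KEY, dept_name TEXT);
CREATE TABLE employee (emp_id INTEGER PRIMARY KEY, name TEXT, phone TEXT, dept_id INTEGER);
INSERT INTO department VALUES (10, 'Finance');
INSERT INTO employee VALUES
    (1, 'Asha', '111', 10),
    (2, 'Asha', '111', 10),    -- duplicate of emp_id 1
    (3, 'Ravi', NULL, 10),     -- missing phone
    (4, 'Meera', '333', 99);   -- orphan: dept 99 does not exist
""")

# Same de-duplication statement as above: keep the lowest emp_id per (name, phone).
conn.execute("""
    DELETE FROM employee
    WHERE emp_id NOT IN (SELECT MIN(emp_id) FROM employee GROUP BY name, phone)
""")

# Orphan check: child rows whose foreign key has no matching parent row.
orphans = conn.execute("""
    SELECT e.emp_id
    FROM employee e
    LEFT JOIN department d ON d.dept_id = e.dept_id
    WHERE d.dept_id IS NULL
""").fetchall()

remaining = [row[0] for row in conn.execute("SELECT emp_id FROM employee ORDER BY emp_id")]
print(remaining, orphans)  # [1, 3, 4] [(4,)]
```

GROUP BY treats all NULL phones as one group, so emp_id 3 survives as the only (Ravi, NULL) row.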

=====================================================================

✅ Subquery in SQL

A subquery (also called an inner query or nested query) is a query inside another SQL query. It is used to return data that will be used by the main (outer) query.

Subqueries help break down complex problems, filter data dynamically, and perform calculations or lookups within a query.

🔹 Types of Subqueries

1️⃣ Non-Correlated Subquery

SELECT emp_id, name
FROM employee
WHERE dept_id = (SELECT dept_id
                 FROM department
                 WHERE dept_name = 'Finance');

- Inner query runs once
- Returns a single value for the outer query to filter on (with =, the subquery must return at most one row; use IN if it can return several)

2️⃣ Correlated Subquery

SELECT e1.emp_id, e1.name, e1.salary
FROM employee e1
WHERE e1.salary > (SELECT AVG(e2.salary)
                   FROM employee e2
                   WHERE e1.dept_id = e2.dept_id);

- Inner query depends on each row of the outer query
- Calculates the average salary of each employee's department dynamically

3️⃣ Subquery in SELECT

SELECT emp_id, name,
       (SELECT dept_name
        FROM department
        WHERE department.dept_id = employee.dept_id) AS dept_name
FROM employee;

- Returns the department name inline for each employee

4️⃣ Subquery in FROM (Derived Table)

SELECT dept_id, AVG(salary) AS avg_salary
FROM (SELECT dept_id, salary FROM employee) AS emp_sub
GROUP BY dept_id;

- Treats the subquery as a temporary table

🔹 Advantages of Subqueries

✔ Makes queries modular and readable

✔ Can replace joins in some scenarios

✔ Useful for dynamic filtering and calculations

✔ Supports nested aggregations
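To illustrate "can replace joins in some scenarios", this minimal SQLite sketch runs the correlated subquery from above and an equivalent derived-table join against invented data, and shows they return the same employees. The join form computes each department average once rather than per outer row. Many optimizers perform this kind of rewrite on their own.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE employee (emp_id INTEGER PRIMARY KEY, name TEXT, dept_id INTEGER, salary INTEGER);
INSERT INTO employee VALUES
    (1, 'Asha', 10, 50000), (2, 'Ravi', 10, 70000),
    (3, 'Meera', 20, 40000), (4, 'Dev', 20, 80000), (5, 'Kiran', 20, 60000);
""")

# Correlated subquery: the inner AVG is evaluated per outer row's department.
correlated = conn.execute("""
    SELECT e1.emp_id FROM employee e1
    WHERE e1.salary > (SELECT AVG(e2.salary) FROM employee e2
                       WHERE e2.dept_id = e1.dept_id)
    ORDER BY e1.emp_id
""").fetchall()

# Equivalent join against a derived table of per-department averages.
joined = conn.execute("""
    SELECT e.emp_id
    FROM employee e
    JOIN (SELECT dept_id, AVG(salary) AS avg_salary
          FROM employee GROUP BY dept_id) a ON a.dept_id = e.dept_id
    WHERE e.salary > a.avg_salary
    ORDER BY e.emp_id
""").fetchall()

print(correlated, joined)  # [(2,), (4,)] [(2,), (4,)]
```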

=====================================================================

Data Warehouse

A Data Warehouse (DW) is a centralized repository used to store large volumes of historical, structured data from multiple sources for reporting and analytics.

👉 It is mainly used for business intelligence (BI) and decision-making.

👉 It is optimized for OLAP (Online Analytical Processing).

🔹 Popular Data Warehouse Platforms

Teradata Vantage

Snowflake

Amazon Redshift

Google BigQuery

Azure Synapse Analytics

=====================================================================

Data Mart

A Data Mart is a subset of a Data Warehouse designed for a specific department or business function (like Sales, Finance, HR).

👉 It contains focused, subject-specific data.

👉 Used for faster reporting for a particular team.

🔹 Example

If a company has a Data Warehouse containing all data:

- Sales team needs only sales data
- Finance team needs only revenue & expense data

👉 Separate Data Marts are created for each team.

🔹 Types of Data Mart

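📌 1️⃣ Dependent Data Mart

👉 Built from the central Data Warehouse

📌 2️⃣ Independent Data Mart

👉 Built directly from operational systems, without a central Data Warehouse

📌 3️⃣ Hybrid Data Mart

👉 Combines Data Warehouse and operational sources: a balanced approach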

🔹 Quick Comparison Table

| Feature | Dependent | Independent | Hybrid |
|---|---|---|---|
| Source | Data Warehouse | Operational systems | Both |
| Data Consistency | High | Medium | High |
| Implementation Speed | Medium | Fast | Medium |
| Best For | Large organizations | Small teams | Mixed requirements |

=====================================================================

Types of Databases

A database is an organized collection of data stored electronically so it can be easily accessed, managed, and updated.

🔹 1. Relational Database (RDBMS)

✔ Table-based

✔ Uses SQL

✔ Strong consistency

✔ Good for structured data

Example: Teradata, Oracle

🔹 2. NoSQL Database

✔ Non-relational

✔ Flexible schema

✔ Used for big data & real-time apps

Types of NoSQL:

| Type | Example |
|---|---|
| Document | MongoDB |
| Key-Value | Redis |
| Column-family | Apache Cassandra |
| Graph | Neo4j |

🔹 3. Hierarchical Database

- Data stored in a tree structure (Parent → Child)
- Example: IBM Information Management System (IMS)

🔹 4. Network Database

- Many-to-many relationships
- More flexible than hierarchical

🔹 5. Object-Oriented Database

- Stores data as objects (like OOP concepts)
- Supports classes, inheritance

🔹 6. Distributed Database

- Data stored across multiple locations
- Used in large enterprise systems

✅ What is a Data Model?

A Data Model is the structured design of how data is stored, organized, and related inside a database or data warehouse.

👉 Simple meaning:

Blueprint of database structure

✅ Types of Data Models (High Level)

There are 3 levels of data modeling:

| Type | Purpose | Used By |
|---|---|---|
| 1️⃣ Conceptual | High-level business view | Business stakeholders |
| 2️⃣ Logical | Detailed structure without DB specifics | Data architects |
| 3️⃣ Physical | Actual implementation in DB | Developers / DBAs |

1️⃣ Conceptual Data Model

A Conceptual Data Model (CDM) is the highest-level representation of data in a system.

It focuses on:

- Business entities
- Relationships between entities
- Business rules

❌ No columns

❌ No data types

❌ No primary/foreign keys

❌ No technical details

📌 What Does It Contain?

Only 3 things:

1️⃣ Entities (High-level objects)

2️⃣ Relationships

3️⃣ High-level business rules

🧠 Example – Banking System

Conceptual view:

- Customer
- Account
- Transaction
- Branch

Relationships:

- Customer owns Account
- Account has Transactions
- Account belongs to Branch

That’s it.

No columns like:

- customer_id
- account_number
- transaction_date

Those come later, in the logical model.

Customer ---- owns ---- Account

Account ---- has ---- Transaction

Branch ---- manages ---- Account

2️⃣ Logical Data Model

A Logical Data Model defines:

- Entities
- Attributes (columns)
- Primary Keys
- Foreign Keys
- Relationships
- Normalization rules

Detailed structure

Entities, attributes, primary keys

No DB-specific datatype

BUT ❌ it does NOT include:

- DB-specific datatypes (VARCHAR(50), INT, etc.)
- Index types (e.g. Primary Index, UPI/NUPI, in Teradata)
- Partitioning
- Storage details

👉 It answers:

“How is the data structured logically?”

🧠 Example – Loan Origination Domain (Relevant to You)

>>Conceptual View

Since you worked on a loan origination Data Vault:

Conceptual entities could be:

- Customer
- Loan
- Channel
- Application
- Payment

Relationships:

- Customer applies for Loan
- Loan submitted via Channel
- Loan has Payments

At this stage, we do NOT decide:

- Hub or Satellite
- Fact or Dimension
- Data types
- Surrogate keys

Those decisions come in the logical/physical phase.

Customer → applies → Loan

Loan → submitted via → Channel

>>Logical Data Model

Customer

- Customer_ID (PK)
- Customer_Name
- Date_of_Birth
- PAN_Number

Loan

- Loan_ID (PK)
- Customer_ID (FK)
- Channel_ID (FK)
- Loan_Amount
- Loan_Status

Channel

- Channel_ID (PK)
- Channel_Name
- Channel_Type

Notice:

✔ We added attributes

✔ Defined PK and FK

✔ Defined relationships

❌ No VARCHAR(50)

❌ No Primary Index (Teradata)

🔄 Relationship Example

1 Customer → Many Loans

So:

Customer (1)

Loan (Many)

Customer_ID becomes FK in Loan.
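Here is a minimal sketch of how the logical Customer → Loan (1:M) relationship turns into an enforced foreign key. It uses SQLite rather than Teradata, and SQLite only enforces foreign keys when PRAGMA foreign_keys is on. Column names follow the logical model above. The data types, the sample rows, and the `try_insert_loan` helper are assumptions.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("PRAGMA foreign_keys = ON")   # SQLite enforces FKs only when enabled
conn.executescript("""
CREATE TABLE Customer (Customer_ID INTEGER PRIMARY KEY, Customer_Name TEXT,
                       Date_of_Birth TEXT, PAN_Number TEXT);
CREATE TABLE Channel  (Channel_ID INTEGER PRIMARY KEY, Channel_Name TEXT, Channel_Type TEXT);
CREATE TABLE Loan (
    Loan_ID     INTEGER PRIMARY KEY,
    Customer_ID INTEGER NOT NULL REFERENCES Customer(Customer_ID),  -- 1 customer : many loans
    Channel_ID  INTEGER NOT NULL REFERENCES Channel(Channel_ID),
    Loan_Amount REAL,
    Loan_Status TEXT
);
INSERT INTO Customer VALUES (1, 'Asha', '1990-05-01', 'ABCDE1234F');
INSERT INTO Channel  VALUES (1, 'Branch', 'Offline');
INSERT INTO Loan VALUES (100, 1, 1, 500000, 'APPROVED'), (101, 1, 1, 200000, 'PENDING');
""")

def try_insert_loan(conn, loan_id, customer_id):
    """Return True if the loan was inserted, False if the FK check rejected it."""
    try:
        conn.execute("INSERT INTO Loan VALUES (?, ?, 1, 100000, 'PENDING')",
                     (loan_id, customer_id))
        return True
    except sqlite3.IntegrityError:   # FOREIGN KEY constraint failed
        return False

ok = try_insert_loan(conn, 102, 1)       # existing customer
orphan = try_insert_loan(conn, 103, 99)  # customer 99 does not exist
loans_per_customer = conn.execute(
    "SELECT Customer_ID, COUNT(*) FROM Loan GROUP BY Customer_ID").fetchall()
print(ok, orphan, loans_per_customer)  # True False [(1, 3)]
```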

🔎 Normalization Happens Here

Logical modeling usually applies:

- 1NF – No repeating groups
- 2NF – Remove partial dependency
- 3NF – Remove transitive dependency

This is why logical models are usually highly normalized.

3️⃣ Physical Data Model

A Physical Data Model defines how data is actually implemented inside a specific database system.

It includes:

- Tables
- Columns
- Data types
- Primary key / Foreign key constraints
- Indexes
- Partitioning
- Storage details
- Performance optimization

👉 It answers:

“How will this run efficiently in a specific database?”


🧠 Example – Loan Origination (Teradata Example)

>>Logical Model

Loan

- Loan_ID
- Customer_ID
- Loan_Amount
- Loan_Status

>>Physical Model (Teradata)

CREATE MULTISET TABLE Loan (
    Loan_ID        BIGINT NOT NULL,
    Customer_ID    BIGINT NOT NULL,
    Loan_Amount    DECIMAL(18,2),
    Loan_Status    VARCHAR(20),
    Load_Timestamp TIMESTAMP(6)
)
PRIMARY INDEX (Loan_ID);

Now we added:

✔ BIGINT

✔ DECIMAL(18,2)

✔ VARCHAR(20)

✔ PRIMARY INDEX

✔ DB syntax

That is physical modeling.

| Feature | Conceptual Data Model | Logical Data Model | Physical Data Model |
|---|---|---|---|
| 🎯 Purpose | Understand business requirements | Define structured data design | Implement in database |
| ❓ Answers | “What data is needed?” | “How is data structured?” | “How will it run efficiently?” |
| 📊 Level | Very high level | Medium level | Low level (detailed) |
| 👥 Audience | Business stakeholders, Product owners | Data architects, Engineers | DBAs, Engineers |
| 📦 Entities | ✅ Yes | ✅ Yes | ✅ Yes (as tables) |
| 🔗 Relationships | ✅ Yes | ✅ Yes | ✅ Yes (FK constraints) |
| 🧾 Attributes (Columns) | ❌ No | ✅ Yes | ✅ Yes |
| 🔑 Primary Key | ❌ No | ✅ Yes | ✅ Yes |
| 🔗 Foreign Key | ❌ No | ✅ Yes | ✅ Yes |
| 📏 Data Types | ❌ No | ❌ No | ✅ Yes |
| ⚙️ Indexes | ❌ No | ❌ No | ✅ Yes |
| 📂 Partitioning | ❌ No | ❌ No | ✅ Yes |
| 🧠 Normalization | ❌ No | ✅ Yes | Optional (may denormalize for performance) |
| 💾 Storage Details | ❌ No | ❌ No | ✅ Yes |
| 🏗 DB-Specific | ❌ No | ❌ No | ✅ Yes |
| 🛠 Used In | Requirement gathering | System design | Implementation & tuning |

=====================================================================

✅ What is SCD?

Slowly Changing Dimensions (SCDs) are methods used to manage and track changes in dimension data over time.

🔹 SCD Type 0 – No Change

- Ignore changes
- Keep original value forever

Rarely used.

🔹 SCD Type 1 – Overwrite

✔ No history

✔ Update existing record

Old value lost ❌

Use Case:

- Correction of a spelling mistake
- Non-historical data

🔹 SCD Type 2 – Maintain Full History ⭐ (Most Important)

✔ Keep old record

✔ Insert new record

✔ Use surrogate key

✔ Track start & end dates

Reasoning: We have dimensions that we want to update, but we don’t want to lose historical data in the process.
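Here is a minimal SCD Type 2 sketch in SQLite. It assumes a single tracked attribute (City), an Is_Current flag, and the 9999-12-31 open-end convention. In this sketch, End_Date is set to the new row's Start_Date; some teams use the day before instead. Names and data are illustrative only.

```python
import sqlite3

HIGH_DATE = "9999-12-31"   # open-ended end date for the current version

conn = sqlite3.connect(":memory:")
conn.execute("""
CREATE TABLE Dim_Customer (
    Customer_SK INTEGER PRIMARY KEY AUTOINCREMENT,  -- surrogate key
    Customer_ID TEXT NOT NULL,                      -- natural/business key
    City        TEXT,
    Start_Date  TEXT NOT NULL,
    End_Date    TEXT NOT NULL,
    Is_Current  INTEGER NOT NULL
)""")

def apply_scd2(conn, customer_id, city, change_date):
    """Expire the current row if the tracked attribute changed, then insert a new version."""
    current = conn.execute(
        "SELECT Customer_SK, City FROM Dim_Customer WHERE Customer_ID = ? AND Is_Current = 1",
        (customer_id,),
    ).fetchone()
    if current is not None and current[1] == city:
        return  # nothing changed: keep the existing version
    if current is not None:
        conn.execute(
            "UPDATE Dim_Customer SET End_Date = ?, Is_Current = 0 WHERE Customer_SK = ?",
            (change_date, current[0]),
        )
    conn.execute(
        "INSERT INTO Dim_Customer (Customer_ID, City, Start_Date, End_Date, Is_Current) "
        "VALUES (?, ?, ?, ?, 1)",
        (customer_id, city, change_date, HIGH_DATE),
    )
    conn.commit()

apply_scd2(conn, "C1", "Pune", "2024-01-01")
apply_scd2(conn, "C1", "Mumbai", "2024-06-01")
apply_scd2(conn, "C1", "Mumbai", "2024-07-01")   # no change: ignored
rows = conn.execute(
    "SELECT Customer_SK, City, Start_Date, End_Date, Is_Current "
    "FROM Dim_Customer ORDER BY Customer_SK"
).fetchall()
print(rows)
# [(1, 'Pune', '2024-01-01', '2024-06-01', 0), (2, 'Mumbai', '2024-06-01', '9999-12-31', 1)]
```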

🔹 SCD Type 3 – Limited History

✔ Add new column

✔ Store previous value

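Unlike SCD-2, older values are not kept: each change overwrites the previous-value column, so only the most recent prior value survives.

Reasoning: We only need the current value and the one immediately before it (e.g. to compare before and after a change), without adding new rows.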

Only 1 level history.
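Here is a minimal SCD Type 3 sketch in SQLite. A single Previous_City column holds the prior value, and one UPDATE shifts the current value into it. This works because in standard SQL (and SQLite) the right-hand sides of SET see the row's pre-update values. Column names and data are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Dim_Customer (
    Customer_ID   TEXT PRIMARY KEY,
    Current_City  TEXT,
    Previous_City TEXT      -- the single extra level of history
);
INSERT INTO Dim_Customer VALUES ('C1', 'Pune', NULL);
""")

def apply_scd3(conn, customer_id, new_city):
    # Shift current -> previous and overwrite current in ONE statement.
    # "IS NOT" is SQLite's NULL-safe inequality, so unchanged values are skipped.
    conn.execute(
        """UPDATE Dim_Customer
           SET Previous_City = Current_City, Current_City = ?
           WHERE Customer_ID = ? AND Current_City IS NOT ?""",
        (new_city, customer_id, new_city),
    )

apply_scd3(conn, "C1", "Mumbai")
apply_scd3(conn, "C1", "Delhi")   # 'Pune' is now lost: only one level of history
print(conn.execute("SELECT * FROM Dim_Customer").fetchall())  # [('C1', 'Delhi', 'Mumbai')]
```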

🔹 SCD Type 4 – History Table

- Current table stores the latest values
- Separate history table stores changes

Less common.

What does this ‘history table’ contain?

- A primary key column - to allow you to join back to the current table (omitted in the below diagram for simplicity)

- The other columns from your dimension table - since you’re capturing the historical values of those columns (e.g. post_code)

- A start date column - when this entry was processed by our data pipeline

- An end date column (optional) - when this entry ended (if it hasn’t ended, you can use a far-future date such as 31-12-9999 to indicate that it is still current)

- A version number column (optional) - 1 indicates the first version, then increments upwards by 1 with every update

Reasoning: We have dimensions that we want to update, but we don’t want to lose historical data in the process. We also want to maintain a snapshot of all the current data.

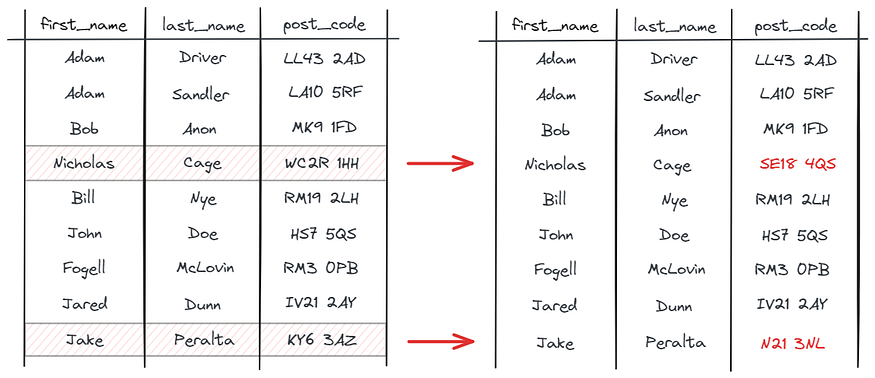

Example: when Nicholas Cage and Jake Peralta update their addresses again, their rows in the current table are overwritten with the new addresses, and each change is recorded as a new row in the history table.

🔹 SCD Type 6 – Hybrid SCD (1 + 2 + 3)

It combines:

- ✅ Type 1 (Overwrite)
- ✅ Type 2 (New row with history)
- ✅ Type 3 (Previous value column)

That’s why it’s sometimes called:

👉 Type 1 + Type 2 + Type 3

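✔ Keep history rows with a current flag (Type 2)

✔ Also keep a current-value column, overwritten on every row of the entity when it changes (Type 1)

✔ Also keep the historical value next to the current value in each row (Type 3 style)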

Used in advanced DW.

Instead of choosing one, Type 6 supports all.

Relational Database Terminology

🔷 1. Tables

Core structure for storing data.

Consist of rows (records) and columns (fields).

Supports indexing, primary keys, foreign keys, etc.

Columns - A column always contains the same kind of information or contains similar data.

Row - A row is one instance of all the columns in the table.

Database - The database is a collection of logically related data. Many users access them for different purposes.

Primary Key - The primary key uniquely identifies each row in a table. No duplicate values are allowed in a primary key column, and it cannot accept NULL values. Most tables should have one, although not every database requires it.

Foreign Key - Foreign keys build relationships between tables. A foreign key column in a child table references the primary key (or another unique key) of a parent table.

A table can have more than one foreign key. It can accept duplicate values and also null values. Foreign keys are optional in a table.

🔷 2. Views

Virtual tables based on one or more tables or views.

Do not store data physically—just SQL definitions.

Use cases:

Restrict access to certain columns/rows.

Simplify complex joins/queries.

Standardize reporting logic.

- It does not store data; the data comes from the underlying base tables.
- Can reference single or multiple tables (joins allowed).
- Useful for row/column restriction, pre-joined queries, and security.

1️⃣ Creating a View

CREATE VIEW Employee_View AS
SELECT Emp_Id,
       First_Name,
       Last_Name,
       Department_No,
       BirthDate
FROM Employee;

2️⃣ Using a View

- Query it like a table:

SELECT Emp_Id,
       First_Name,
       Last_Name,
       Department_No,
       BirthDate
FROM Employee_View;

- Joins, WHERE, GROUP BY, and aggregates can be applied to views just like tables.

3️⃣ Modifying a View

- Use REPLACE VIEW to redefine an existing view.

REPLACE VIEW Employee_View AS
SELECT Emp_Id,
       First_Name,
       Last_Name,
       BirthDate,
       JoinedDate,
       Department_No
FROM Employee;

4️⃣ Dropping a View

DROP VIEW Employee_View;

5️⃣ Advantages of Views

- Security: Restrict access to certain rows/columns.
- Simplification: Pre-join tables for easier querying.
- Bandwidth efficiency: Only required columns are fetched.
- Logical abstraction: Decouples users from underlying table structure.

🔷 3. Macros

A Macro is a stored set of SQL statements that can be executed with a single command.

Can accept parameters.

Useful for repeating business logic or securing access.

Definition is stored in the Data Dictionary, but results are generated dynamically.

Example:

1️⃣ Creating a Macro

CREATE MACRO Get_Emp AS
(
  SELECT Emp_Id, First_Name, Last_Name
  FROM Employee
  ORDER BY Emp_Id;
);

- Macro name must be unique in the database/user.
- Can contain multiple SQL statements.

2️⃣ Executing a Macro

EXEC Get_Emp;

3️⃣ Parameterized Macros

- Parameters allow dynamic filtering or passing values into the Macro.
- Prefix a parameter name with a colon (e.g. :Emp_Id) to reference it inside the Macro.

Syntax:

CREATE MACRO Get_Emp_Salary(Emp_Id INTEGER) AS
(
  SELECT Emp_Id, NetPay
  FROM Salary
  WHERE Emp_Id = :Emp_Id;
);

Execute Parameterized Macro:

EXEC Get_Emp_Salary(202001);

4️⃣ Replacing a Macro

- Use REPLACE MACRO to redefine an existing Macro.
- Privileges depend on whether the Macro exists:
  - Already exists → need DROP MACRO privilege.
  - Doesn’t exist → need CREATE MACRO privilege.

REPLACE MACRO Get_Emp AS
(
  SELECT Emp_Id, First_Name, Last_Name, Department_No
  FROM Employee
  ORDER BY Emp_Id;
);

5️⃣ Key Points / Advantages

- Simplifies repeated SQL tasks.
- Macros execute in a single transaction, ensuring consistency.
- Parameterized Macros allow dynamic queries.
- Users only need the EXECUTE privilege on the Macro, not direct privileges on the underlying tables.
- Can include nested Macro execution via EXEC statements.

🔷 4. Triggers

A set of SQL statements that automatically executes in response to data changes (INSERT, UPDATE, DELETE).

Attached to a table.

Used for enforcing rules or auditing changes.

The trigger logic is stored in the Data Dictionary and executed automatically.

Users don’t need to explicitly call a trigger—it runs when the event occurs.

1️⃣ Types of Triggers in Teradata

Triggers in Teradata can be categorized based on timing and event:

| Type | Description |
|---|---|
| BEFORE Trigger | Executes before the triggering action (INSERT, UPDATE, DELETE). |
| AFTER Trigger | Executes after the triggering action is completed. |
| INSERT Trigger | Fires when a new row is inserted into the table. |
| UPDATE Trigger | Fires when a column in a row is updated. |
| DELETE Trigger | Fires when a row is deleted from the table. |

Note: Teradata primarily supports AFTER triggers; BEFORE triggers are limited.

2️⃣ Trigger Events

A trigger can be defined for one or more of the following events:

- INSERT – when new records are added.
- UPDATE – when existing records are modified.
- DELETE – when records are removed.

3️⃣ Trigger Syntax in Teradata

Components:

- REFERENCING OLD AS / NEW AS: Allows access to old or new values of the row.
- FOR EACH ROW: Executes the trigger once per affected row.
- FOR EACH STATEMENT: Executes the trigger once per SQL statement, regardless of how many rows are affected.

4️⃣ Example 1: AFTER INSERT Trigger

Assume we have a table Employee:

| Emp_Id | Name | Department | Salary |
|---|---|---|---|
| 101 | Mike | Sales | 40000 |
| 102 | Robert | IT | 50000 |

Create an audit table:

CREATE TABLE Employee_Audit
(
  Emp_Id      INTEGER,
  Action_Type VARCHAR(20),   -- wide enough for 'UPDATE SALARY' (13 characters)
  Action_Date TIMESTAMP
);

Trigger to log inserts:

CREATE TRIGGER Employee_Insert_Audit
AFTER INSERT ON Employee
REFERENCING NEW AS new_row
FOR EACH ROW
BEGIN ATOMIC
  INSERT INTO Employee_Audit (Emp_Id, Action_Type, Action_Date)
  VALUES (new_row.Emp_Id, 'INSERT', CURRENT_TIMESTAMP);
END;

- Every time a new employee is inserted, a record is automatically logged in Employee_Audit.

5️⃣ Example 2: AFTER UPDATE Trigger

Trigger to log salary updates:

CREATE TRIGGER Employee_Salary_Update
AFTER UPDATE OF Salary ON Employee
REFERENCING OLD AS old_row NEW AS new_row
FOR EACH ROW
BEGIN ATOMIC
  INSERT INTO Employee_Audit (Emp_Id, Action_Type, Action_Date)
  VALUES (new_row.Emp_Id, 'UPDATE SALARY', CURRENT_TIMESTAMP);
END;

- Tracks salary changes for every employee.

6️⃣ Example 3: DELETE Trigger

Trigger to archive deleted records (assumes an Employee_Archive table with these columns already exists):

CREATE TRIGGER Employee_Delete_Archive
AFTER DELETE ON Employee
REFERENCING OLD AS old_row
FOR EACH ROW
BEGIN ATOMIC
  INSERT INTO Employee_Archive (Emp_Id, Name, Department, Salary, Deleted_Date)
  VALUES (old_row.Emp_Id, old_row.Name, old_row.Department, old_row.Salary, CURRENT_TIMESTAMP);
END;

- Automatically copies deleted rows to an archive table.
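The audit pattern can be tried locally with SQLite, whose trigger syntax differs from Teradata's: there is no REFERENCING clause, and the row images are always called NEW and OLD. This minimal sketch is adapted from Examples 1 and 2. The Old_Salary/New_Salary audit columns are an assumption, added to show both row images.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE Employee (Emp_Id INTEGER PRIMARY KEY, Name TEXT, Department TEXT, Salary INTEGER);
CREATE TABLE Employee_Audit (Emp_Id INTEGER, Action_Type TEXT, Old_Salary INTEGER, New_Salary INTEGER);

-- SQLite exposes the row images as NEW/OLD directly (no REFERENCING clause).
CREATE TRIGGER Employee_Insert_Audit AFTER INSERT ON Employee
FOR EACH ROW BEGIN
    INSERT INTO Employee_Audit VALUES (NEW.Emp_Id, 'INSERT', NULL, NEW.Salary);
END;

-- Fires only when Salary appears in the UPDATE's SET list.
CREATE TRIGGER Employee_Salary_Update AFTER UPDATE OF Salary ON Employee
FOR EACH ROW BEGIN
    INSERT INTO Employee_Audit VALUES (NEW.Emp_Id, 'UPDATE SALARY', OLD.Salary, NEW.Salary);
END;
""")

conn.execute("INSERT INTO Employee VALUES (101, 'Mike', 'Sales', 40000)")
conn.execute("UPDATE Employee SET Salary = 45000 WHERE Emp_Id = 101")
conn.execute("UPDATE Employee SET Department = 'IT' WHERE Emp_Id = 101")  # no audit row
audit = conn.execute("SELECT * FROM Employee_Audit ORDER BY rowid").fetchall()
print(audit)  # [(101, 'INSERT', None, 40000), (101, 'UPDATE SALARY', 40000, 45000)]
```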

🔷 5. Stored Procedures

A program written in SQL and stored on the database.

Can contain control-of-flow logic (IF, LOOP, etc.).

Can be scheduled, parameterized, or reused.

Example use case: ETL workflows, validations, auditing.

Supports error handling and transaction control.

Unlike macros, SPs support conditional logic, loops, and error handling.

SPs can return one or more dynamic result sets to the caller.

1️⃣ Advantages of Stored Procedures

- Code reusability: Write once, call many times.
- Encapsulation: Hides business logic from end users.
- Performance: Precompiled SQL reduces parsing time.
- Can implement complex logic like conditional updates, loops, exception handling.
- Can reduce network traffic, as multiple SQL statements are executed on the database server.

2️⃣ Components:

- IN – Input parameter (passed to procedure).
- OUT – Output parameter (returned from procedure).
- INOUT – Can be passed in and returned after modification.
- BEGIN … END – Defines the body of the procedure.
- Supports SQL statements (SELECT, INSERT, UPDATE, DELETE) and control flow statements (IF, CASE, WHILE, FOR, LOOP).

3️⃣ Example 1: Simple Stored Procedure

Goal: Retrieve employee salary by Employee ID.

CREATE PROCEDURE Get_Emp_Salary(IN EmpId INTEGER, OUT EmpSalary INTEGER)
BEGIN
  SELECT NetPay
  INTO :EmpSalary
  FROM Salary
  WHERE Emp_Id = :EmpId;
END;

Execution:

CALL Get_Emp_Salary(202001, ?);

- ? is used to capture the output parameter.
- Returns the NetPay of employee 202001.

4️⃣ Example 2: Stored Procedure with Conditional Logic

Goal: Give a bonus to employees based on salary.

CREATE PROCEDURE Give_Bonus(IN EmpId INTEGER)
BEGIN
  DECLARE CurrentSalary INTEGER;

  -- Retrieve current salary
  SELECT NetPay INTO :CurrentSalary
  FROM Salary
  WHERE Emp_Id = :EmpId;

  -- Conditional logic
  IF CurrentSalary < 50000 THEN
    UPDATE Salary
    SET NetPay = NetPay + 5000
    WHERE Emp_Id = :EmpId;
  ELSE
    UPDATE Salary
    SET NetPay = NetPay + 2000
    WHERE Emp_Id = :EmpId;
  END IF;
END;

Execution:

CALL Give_Bonus(202001);

- Adds a bonus dynamically based on salary.

5️⃣ Example 3: Stored Procedure with Loop

Goal: Increase salary of all employees in a department by 10%.

CREATE PROCEDURE Increase_Department_Salary(IN DeptNo INTEGER)
BEGIN
  DECLARE EmpId INTEGER;
  DECLARE done INTEGER DEFAULT 0;
  DECLARE cur1 CURSOR FOR
    SELECT Emp_Id FROM Employee WHERE Department_No = :DeptNo;
  DECLARE CONTINUE HANDLER FOR NOT FOUND SET done = 1;

  OPEN cur1;
  loop1: LOOP
    FETCH cur1 INTO :EmpId;
    IF done = 1 THEN
      LEAVE loop1;
    END IF;
    UPDATE Salary
    SET NetPay = NetPay * 1.10
    WHERE Emp_Id = :EmpId;
  END LOOP loop1;
  CLOSE cur1;
END;

Execution:

CALL Increase_Department_Salary(1);

- Loops through all employees in Department 1 and increases their salary by 10%.

6️⃣ Drop a Stored Procedure

DROP PROCEDURE <procedure_name>;

Key Differences: Macro vs Stored Procedure

| Feature | Macro | Stored Procedure |
|---|---|---|
| 🎯 Purpose | Execute a set of SQL statements | Perform complex logic with control flow |
| 🧠 Logic Support | ❌ No procedural logic | ✅ Supports IF, LOOP, WHILE |
| 🔁 Reusability | ✅ Yes | ✅ Yes |
| ⚙️ Execution | Stored SQL text, executed as a single request | Compiled procedural program |
| 📥 Parameters | ✅ Supported (referenced with :name) | ✅ Supported (IN, OUT, INOUT) |
| 📤 Return Values | Result sets of its SELECT statements only (no OUT parameters) | ✅ Yes (via OUT params / result sets) |
| 🔄 Control Flow | ❌ No branching or loops | ✅ Full control flow |
| ⚡ Performance | Faster (simple execution) | Slightly slower (due to logic handling) |
| 🛠 Complexity | Simple | Complex |
| 📦 Use Case | Reusable SQL queries | Business logic, validations, ETL |

🔷 6. Indexes

Used to optimize data access.

Types:

Primary Index (UPI/NUPI) – determines data distribution.

Secondary Index – improves access for non-primary keys.

Join Index – speeds up joins.

Hash Index – supports fast lookups (less common now).

⚠️ Impact of Index on Writes

❌ Slows down writes

Why?

- Every write = extra work

- Insert row + update index

- Update row → update index

- Delete row → remove from index

🧾 Example

Table without index:

- Insert → just add row ✅ (fast)

Table with index:

- Insert → add row + update index ❌ (slower)
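A toy Python model of this write amplification, assuming exactly one extra physical write per secondary index for each changed row (real Teradata subtable maintenance, journaling, and fallback add more work). `ToyTable` and its methods are hypothetical names used only for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class ToyTable:
    """Toy model: base rows plus one lookup structure per secondary index."""
    index_columns: list[str]
    rows: dict[int, dict] = field(default_factory=dict)
    indexes: dict[str, dict] = field(default_factory=dict)
    write_ops: int = 0  # physical writes performed so far

    def __post_init__(self) -> None:
        for col in self.index_columns:
            self.indexes[col] = {}  # index value -> set of row ids

    def insert(self, row_id: int, row: dict) -> None:
        self.rows[row_id] = row
        self.write_ops += 1  # write the base row
        for col, idx in self.indexes.items():
            idx.setdefault(row[col], set()).add(row_id)
            self.write_ops += 1  # plus one write per index

    def update(self, row_id: int, col: str, value) -> None:
        row = self.rows[row_id]
        old = row[col]
        row[col] = value
        self.write_ops += 1  # rewrite the base row
        if col in self.indexes:  # indexed column changed: move its index entry
            self.indexes[col][old].discard(row_id)
            self.indexes[col].setdefault(value, set()).add(row_id)
            self.write_ops += 1

    def delete(self, row_id: int) -> None:
        row = self.rows.pop(row_id)
        self.write_ops += 1  # remove the base row
        for col, idx in self.indexes.items():
            idx[row[col]].discard(row_id)
            self.write_ops += 1  # plus one removal per index


plain = ToyTable(index_columns=[])
indexed = ToyTable(index_columns=["email", "status"])
for table in (plain, indexed):
    table.insert(1, {"email": "a@x.com", "status": "NEW"})
print(plain.write_ops, indexed.write_ops)  # 1 3
```

With two indexes, the same INSERT costs three writes instead of one; every extra index adds another.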

🔥 Materialized View (MV)

A materialized view is a physical copy of a query result that is stored on disk and refreshed periodically or on demand.

👉 Unlike a normal view (which runs the query every time), a Materialized View stores precomputed results.

🔥 How It Works

When you create a Materialized View:

- The query is executed.
- The result is stored physically.
- Future queries read from the stored result.
- The data must be refreshed when the base tables change.

🔹 Example (Generic SQL)

CREATE MATERIALIZED VIEW mv_sales_summary AS

SELECT customer_id, SUM(amount) total_sales

FROM sales

GROUP BY customer_id;

Now:

SELECT * FROM mv_sales_summary;

👉 No aggregation runs again — data is already stored.

🔥 Refresh Types

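1️⃣ Manual Refresh (PostgreSQL syntax)

REFRESH MATERIALIZED VIEW mv_sales_summary;

2️⃣ Automatic Refresh

- ON COMMIT (refresh when a transaction that changed a base table commits; offered by Oracle, for example)
- Scheduled refresh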
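A minimal Python sketch of the store-then-refresh behaviour described above. `ToyMaterializedView` and `sales_summary` are hypothetical names; the base table is a list of `(customer_id, amount)` tuples, and `refresh()` plays the role of a manual refresh (an ON COMMIT refresh would amount to calling it after every committed change).

```python
from collections import defaultdict


def sales_summary(sales):
    """The view's query: SUM(amount) GROUP BY customer_id."""
    totals = defaultdict(float)
    for customer_id, amount in sales:
        totals[customer_id] += amount
    return dict(totals)


class ToyMaterializedView:
    """Stores the query result; it only changes when refresh() is called."""

    def __init__(self, base_table, query):
        self.base_table = base_table
        self.query = query
        self.stored = query(base_table)  # query executed once, result stored

    def select_all(self):
        return self.stored  # no aggregation at read time

    def refresh(self):
        self.stored = self.query(self.base_table)  # re-run the query, replace stored result


sales = [(1, 100.0), (1, 50.0), (2, 75.0)]
mv = ToyMaterializedView(sales, sales_summary)
sales.append((2, 25.0))   # the base table changes...
print(mv.select_all())    # {1: 150.0, 2: 75.0}  (stale until refreshed)
mv.refresh()
print(mv.select_all())    # {1: 150.0, 2: 100.0}
```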

🔥 Advantages

✅ Faster reporting

✅ Reduces heavy joins

✅ Saves CPU

✅ Good for data warehouse

🔥 Disadvantages

❌ Uses storage

❌ Needs refresh management

❌ Slower DML on base tables

🔥 Materialized View in Teradata

In Teradata Vantage, there is no direct "Materialized View" keyword.

👉 Instead, Teradata uses:

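- Join Index
- Summary Tables (ordinary tables that you load and refresh yourself)
- Aggregate Join Index

Join indexes work like materialized views, with two differences: the system keeps them in sync with the base tables on every change, and they cannot be queried directly. The optimizer uses a join index automatically when it can answer all or part of a query.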

Example (Teradata style using Join Index):

CREATE JOIN INDEX mv_sales_summary AS

SELECT customer_id, SUM(amount) total_sales

FROM sales

GROUP BY customer_id

PRIMARY INDEX (customer_id);

🔥 When to Use Materialized View?

✔ Heavy aggregation queries

✔ Repeated reporting queries

✔ Large joins

✔ Data warehouse systems

Teradata Corporation built Teradata using MPP (Massively Parallel Processing) architecture.

🔹 1️⃣ Parsing Engine (PE)

The Parsing Engine is a virtual processor (vproc) that interprets SQL requests, receives input records, and passes data.

To do that, it sends the messages over the BYNET to the AMPs (Access Module Processor).

👉 PE does NOT store data.

🔹 2️⃣ BYNET

This is the message-passing layer or simply the networking layer in Teradata.

It receives the execution plan from the parsing engine and passes it to AMPs and the nodes.

After that, it gets the processed output from the AMPs and sends it back to the parsing engine.

For availability, there are two BYNETs, BYNET 0 and BYNET 1. If one fails, traffic continues over the other.

| Feature | Explanation |
|---|---|
| High-speed network | Connects nodes |
| Message passing | Transfers data between AMPs |
| Parallel communication | Enables MPP processing |

👉 Acts as the system's backbone network.

🔹 3️⃣ AMP (Access Module Processor)

An AMP is a virtual processor (vproc) dedicated to managing one portion of the database. AMPs receive the execution steps and data from the Parsing Engine.

Each AMP performs any required conversion, filtering, aggregation, and sorting on its share of the data, writes rows to the disks it controls, and retrieves the rows the Parsing Engine requests.

Each table's rows are spread across the AMPs, so every AMP stores a slice of the table.

| Function | Explanation |
|---|---|
| Data Storage | Stores actual table data |
| Data Retrieval | Fetches rows |
| Index Handling | Manages primary index |
| Parallel Processing | Each AMP works independently |

👉 Data is distributed across AMPs.

🔹 4️⃣ Node

A Node is a physical server in the Teradata system.

It includes both hardware and software components that support the execution of Teradata’s parallel database processing.

| Feature | Description |
|---|---|
| Physical Server | Runs Teradata software |
| Contains | Multiple AMPs and PEs |
| Scalable | Add nodes to scale system |

🔹 Parallel Database Extensions (PDE)

The Parallel Database Extensions is a software interface layer that lies between the operating system and database. It controls the virtual processors (vprocs).

🔹 Disks

Disks are disk drives associated with an AMP that store the data rows. On current systems, they are implemented using a disk array.

🔄 Query Execution Flow in Teradata

1️⃣ Query Submission

A user or application submits an SQL query.

2️⃣ Parsing Engine (PE)

- Parser: Checks SQL syntax and semantics.
- Security: Validates user access.
- Optimizer: Generates the most cost-effective execution plan.
- Dispatcher: Sends the execution steps to the BYNET.

3️⃣ BYNET

Routes the steps from the PE to the appropriate AMPs.

4️⃣ AMPs (Access Module Processors)

- Execute the steps (e.g., read/write data).
- Retrieve or update data on their own disks.
- Send results back to the PE.

5️⃣ PE (again)

- Assembles the rows.
- Sends the final result back to the client.
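The flow above can be sketched in Python for a simple aggregate query. This is a toy model under stated assumptions: each AMP is a plain list of `(region, amount)` rows, a thread pool stands in for the AMPs working in parallel, and `amp_step`/`run_query` are hypothetical names, not Teradata APIs.

```python
from concurrent.futures import ThreadPoolExecutor


def amp_step(amp_rows, region):
    """Work one AMP does on its own rows only: filter + local aggregate."""
    return sum(amount for r, amount in amp_rows if r == region)


def run_query(amps, region):
    """PE side: dispatch the same step to every AMP, then combine the partial results."""
    with ThreadPoolExecutor(max_workers=len(amps)) as pool:  # AMPs run in parallel
        partials = list(pool.map(lambda rows: amp_step(rows, region), amps))
    return sum(partials), partials


amps = [
    [("EAST", 10), ("WEST", 5)],  # AMP 0's rows
    [("EAST", 7)],                # AMP 1's rows
    [("WEST", 3), ("EAST", 1)],   # AMP 2's rows
]
total, partials = run_query(amps, "EAST")
print(partials, total)  # [10, 7, 1] 18
```

Each AMP only touches its own slice; the PE just adds up one partial result per AMP.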

=====================================================================

Index Types in Teradata

In Teradata, indexes are mainly used for data distribution and faster retrieval.

1️⃣ PI (Primary Index)

The Primary Index (PI) is the core mechanism for distributing rows across AMPs and retrieving data efficiently.

Primary Index decides:

- Where each row is stored (which AMP)
- How data is distributed across AMPs

👉 Every table has one PI, unless it is explicitly created as a NoPI table (see NoPI tables below)

👉 It does NOT have to be unique

✅ Key Characteristics

| Attribute | Description |
|---|---|
| Purpose | Determines how rows are distributed and accessed |
| When defined? | At CREATE TABLE time; the PI columns cannot be changed on a populated table (create a new table with the new PI and copy the data instead) |
| Max Columns | Can consist of 1 to 64 columns |
| Definition stored in | Data Dictionary (DD) |

🧠 Best Practices for Choosing a PI

Choose a highly unique column to avoid skew (UPI preferred).

Consider query access patterns (frequent filters, joins).

Try to align PI with foreign keys in child tables for AMP-local joins.

Avoid columns with few distinct values (e.g., Gender, Status) as PI → causes skew.

📈 Why PI Matters for Performance

Every row access by PI is a 1-AMP operation → fastest.

Good PI choice = even AMP workload → maximum parallelism.

Bad PI choice = skew → one AMP overworked, others underutilized.

🚫 Common Mistakes

Using low-cardinality columns as PI (e.g., a Country column where nearly every row is "India", or a Yes/No flag).

Forgetting that PI ≠ PK in Teradata.

Choosing PI without analyzing access patterns.

🔹 2️⃣ UPI (Unique Primary Index)

A Primary Index where column values are unique.

👉 No duplicate values allowed

👉 Best performance

👉 Even data distribution

🔹 3️⃣ NUPI (Non-Unique Primary Index)

Primary Index where duplicates are allowed.

The hashing algorithm still determines the target AMP, but:

All rows with the same NUPI value go to the same AMP.

This can lead to uneven (skewed) distribution.

Example: Skew from Poor NUPI Choice

If you choose Order_Status (values "C" and "O") as a NUPI, all rows hash to at most 2 AMPs.

The remaining AMPs stay idle → severe performance degradation.
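A small Python simulation of the skew described above. It assumes an MD5-based stand-in for Teradata's (different, proprietary) hashing algorithm, maps hashes to AMPs with a plain modulo instead of a hash map, and uses the common DBA skew formula `100 * (1 - avg / max)`; all function names are hypothetical.

```python
import hashlib
from collections import Counter


def row_hash(pi_value) -> int:
    """Stand-in 32-bit row hash (MD5-based); Teradata's real algorithm is different."""
    return int.from_bytes(hashlib.md5(str(pi_value).encode()).digest()[:4], "big")


def rows_per_amp(pi_values, num_amps: int) -> list[int]:
    """Count rows per AMP, mapping hash -> AMP with a simple modulo (no hash map)."""
    counts = Counter(row_hash(v) % num_amps for v in pi_values)
    return [counts.get(amp, 0) for amp in range(num_amps)]


def skew_factor(counts: list[int]) -> float:
    """Common DBA formula: 100 * (1 - avg / max); 0 means perfectly even."""
    avg = sum(counts) / len(counts)
    return 100 * (1 - avg / max(counts))


NUM_AMPS = 8
orders = [{"order_id": i, "order_status": "O" if i % 3 == 0 else "C"} for i in range(10_000)]

by_id = rows_per_amp((o["order_id"] for o in orders), NUM_AMPS)
by_status = rows_per_amp((o["order_status"] for o in orders), NUM_AMPS)

print("UPI order_id:     ", by_id, f"skew={skew_factor(by_id):.1f}%")
print("NUPI order_status:", by_status, f"skew={skew_factor(by_status):.1f}%")
```

With the unique order_id every AMP gets a similar share; with order_status at most two AMPs hold all 10,000 rows and the other six hold none.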

👉 Most commonly used

👉 Can cause data skew if not chosen properly

Example: a NUPI on cust_id in an orders table is a skew risk if a few customers place most of the orders.

🔹 4️⃣ SI (Secondary Index)

A Secondary Index (SI) in Teradata is an additional physical access path to the data, created on columns other than the Primary Index (PI) and separate from it.

It is used to improve query performance when the query's search condition does not use the PI columns.

👉 It does NOT control data distribution

👉 It helps in faster data retrieval

👉 It uses extra storage

🔍 Why Use a Secondary Index?

When queries frequently use non-PI columns in WHERE conditions.

To avoid full table scans.

To reduce query response time.

To optimize joins or aggregations on non-PI columns.

Summary:

- Optional
- Used for non-PI columns
- Improves read performance
- Requires extra storage
- Increases write overhead

🔹 5️⃣ USI (Unique Secondary Index)

A Unique Secondary Index (USI) is a secondary index created on a column where duplicate values are NOT allowed.

👉 Ensures uniqueness

👉 Stored in a separate index subtable

👉 Very fast lookup (typically a two-AMP operation: one AMP for the subtable row, one for the base row)

🔹 How USI Works Internally

- User runs query:

SELECT * FROM customer WHERE email_id = 'rk@gmail.com';

- Teradata hashes the USI column value.
- It goes to the specific AMP storing that value's USI subtable row.
- It finds the RowID of the base row.
- It fetches the actual row from the base table.

👉 Very fast because the value is unique: at most one subtable row and one base row are read.
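A toy Python model of the USI path above, assuming an MD5-based stand-in hash and a simple modulo in place of the hash map. The `(base_amp, customer_id)` pointer stands in for the RowID stored in the subtable row (in Teradata, the RowID's RowHash identifies the owning AMP); `ToyUSITable` and its methods are hypothetical. Because the subtable is distributed by the USI value, both the uniqueness check and the lookup start at a single subtable AMP.

```python
import hashlib


def amp_of(value, num_amps: int) -> int:
    """Stand-in hash -> AMP mapping (MD5-based, not Teradata's real hash)."""
    return int.from_bytes(hashlib.md5(str(value).encode()).digest()[:4], "big") % num_amps


class ToyUSITable:
    def __init__(self, num_amps: int):
        self.num_amps = num_amps
        self.base = [dict() for _ in range(num_amps)]     # per AMP: PI value -> row
        self.usi_sub = [dict() for _ in range(num_amps)]  # per AMP: USI value -> row pointer

    def insert(self, row: dict) -> None:
        usi_amp = amp_of(row["email_id"], self.num_amps)
        if row["email_id"] in self.usi_sub[usi_amp]:
            raise ValueError("duplicate USI value")  # uniqueness checked on one AMP
        base_amp = amp_of(row["customer_id"], self.num_amps)  # PI decides base placement
        self.base[base_amp][row["customer_id"]] = row
        self.usi_sub[usi_amp][row["email_id"]] = (base_amp, row["customer_id"])

    def lookup_by_email(self, email: str):
        """Returns (row or None, set of AMPs touched)."""
        usi_amp = amp_of(email, self.num_amps)        # 1. hash the USI value
        pointer = self.usi_sub[usi_amp].get(email)    # 2. read the subtable on that AMP
        if pointer is None:
            return None, {usi_amp}
        base_amp, pi_value = pointer                  # 3. pointer -> owning AMP
        return self.base[base_amp][pi_value], {usi_amp, base_amp}  # 4. fetch base row


t = ToyUSITable(num_amps=4)
t.insert({"customer_id": 1, "email_id": "rk@gmail.com", "name": "RK"})
t.insert({"customer_id": 2, "email_id": "am@gmail.com", "name": "AM"})
row, amps_touched = t.lookup_by_email("rk@gmail.com")
print(row["name"], len(amps_touched))  # RK, then 1 or 2 (never all 4 AMPs)
```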

🔹 6️⃣ NUSI (Non-Unique Secondary Index)

A Non-Unique Secondary Index (NUSI) is a secondary index created on a column where duplicate values are allowed.

👉 Allows duplicates

👉 Used for filtering; improves SELECT performance

👉 Does NOT control data distribution

👉 AMP-local: each AMP's NUSI subtable indexes only the base rows stored on that same AMP

🔹 How NUSI Works Internally

- Query runs:

SELECT * FROM orders WHERE status = 'SHIPPED';

- Every AMP checks its own NUSI subtable (an all-AMP operation).
- Each AMP finds the list of RowIDs for its matching rows.
- Each AMP fetches those rows from its portion of the base table.

👉 Since duplicates exist, multiple rows are returned.
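A toy Python model of the NUSI path above, assuming an MD5-based stand-in hash, a modulo instead of a hash map, and hypothetical names (`ToyNUSITable`, `select_by_status`). The key point it shows is that each index entry lives on the same AMP as its base row, so a lookup has to visit every AMP.

```python
import hashlib
from collections import defaultdict


def amp_of(value, num_amps: int) -> int:
    """Stand-in hash -> AMP mapping (MD5-based, not Teradata's real hash)."""
    return int.from_bytes(hashlib.md5(str(value).encode()).digest()[:4], "big") % num_amps


class ToyNUSITable:
    def __init__(self, num_amps: int):
        self.num_amps = num_amps
        self.base = [dict() for _ in range(num_amps)]                 # PI value -> row
        self.nusi_sub = [defaultdict(list) for _ in range(num_amps)]  # status -> local PI values

    def insert(self, row: dict) -> None:
        amp = amp_of(row["order_id"], self.num_amps)  # PI decides the AMP
        self.base[amp][row["order_id"]] = row
        # AMP-local: the index entry goes to the same AMP as the base row
        self.nusi_sub[amp][row["status"]].append(row["order_id"])

    def select_by_status(self, status: str):
        """All-AMP operation: every AMP probes its own subtable, then reads its own rows."""
        result, amps_probed = [], 0
        for amp in range(self.num_amps):
            amps_probed += 1
            for pi_value in self.nusi_sub[amp].get(status, []):
                result.append(self.base[amp][pi_value])
        return result, amps_probed


t = ToyNUSITable(num_amps=4)
for i in range(10):
    t.insert({"order_id": i, "status": "SHIPPED" if i % 2 else "NEW"})
rows, probed = t.select_by_status("SHIPPED")
print(len(rows), probed)  # 5 4
```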

✅ What is a NoPI Table?

A NoPI (No Primary Index) Table is a table that does not have a defined Primary Index.

This was introduced in Teradata 13.0 to improve bulk load performance and simplify certain workloads.

CREATE TABLE table_x

(col_x INTEGER

,col_y CHAR(10)

,col_z DATE)

NO PRIMARY INDEX;

📦 Why Use NoPI Tables?

Ideal for staging or landing tables in ETL pipelines.

Great for bulk loading large volumes of data quickly.

Often used temporarily before redistributing data into final structured tables.

🧠 Best Practices:

Use NoPI tables for staging or intermediate ETL steps.

Avoid long-term use unless you really do not need joins or indexed access.

After loading, redistribute data into base tables with a PI for performance.

=====================================================================

🚀 How Teradata Distributes Rows Across AMPs

In Teradata, row distribution is automatic and hash-based.

Each AMP (Access Module Processor) is responsible for a portion of each table's data. The hashing algorithm spreads distinct Primary Index values evenly across AMPs, so how even a table's distribution actually is depends on its PI: many distinct values give an even spread, while few or heavily repeated values cause skew.

🔁 Hash-Based Distribution Mechanism

When a row is inserted into a table:

Teradata hashes the Primary Index column(s) value.

The resulting hash value is mapped to an AMP.

That AMP stores the row on its associated disk.

📌 Hashing is done on Primary Index (PI) — it’s how Teradata determines where to store a row.

🎯 Why Even Distribution Matters

Ensures parallelism is fully utilized.

Avoids skew (when some AMPs hold significantly more rows than others).

Prevents the most heavily loaded AMP from becoming the bottleneck in all-AMP operations (a query finishes only when its slowest AMP finishes).

✅ Ideally:

Each table's rows are evenly distributed across all AMPs.

All AMPs perform roughly equal work on large queries.

🔄 Row Distribution Mechanism

Teradata uses a hashing algorithm on the PI column(s).

Hash result determines the AMP where the row will be stored.

Leads to parallelism across AMPs.

📥 How Data Gets Distributed Across AMPs

When a row is inserted into a Teradata table:

The Primary Index (PI) value of the row is input into a hashing algorithm.

The algorithm outputs a 32-bit Row Hash.

The high-order 16 bits are used to find a Hash Bucket Number (systems configured with 1,048,576 hash buckets use the high-order 20 bits).

The Hash Map uses that bucket number to determine which AMP will store the row.

The AMP stores the row in its associated vDisk.

Teradata Hashing Algorithm

Teradata uses a hashing algorithm to determine which AMP (Access Module Processor) stores a row.

- Every row in Teradata is distributed to an AMP based on its Primary Index (PI) value.
- The hashing algorithm transforms the PI into a 32-bit RowHash, which is used to identify the AMP.

1️⃣ How Hashing Works (Step-by-Step)

- Client submits query → provides the Primary Index value of the row.
- Parser receives the query and passes the PI value to the hashing algorithm.
- Hashing algorithm generates a 32-bit RowHash from the PI value.
- High-order bits of the RowHash (first 16 bits) determine the Hash Bucket.
- Hash Map maps the Hash Bucket to a specific AMP.
- BYNET sends the row to the identified AMP.
- AMP uses the RowHash to locate the row on disk.
- If multiple rows have the same RowHash, a uniqueness ID is incremented.
- Combination of RowHash + Uniqueness ID → RowID, which uniquely identifies each row.
- Rows on the AMP are logically stored sorted by RowID.
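The step-by-step flow can be sketched in Python. Assumptions, stated loudly: MD5 stands in for Teradata's real hashing algorithm, the hash map assigns buckets to AMPs round-robin, the uniqueness value starts at 1, and every name here (`row_hash`, `hash_bucket`, `ToyAMPs`) is hypothetical.

```python
import hashlib
from collections import defaultdict

BUCKET_BITS = 16                 # as described in these notes
NUM_BUCKETS = 1 << BUCKET_BITS


def row_hash(pi_value) -> int:
    """Stand-in 32-bit RowHash (MD5-based); Teradata's real hashing algorithm differs."""
    return int.from_bytes(hashlib.md5(str(pi_value).encode()).digest()[:4], "big")


def hash_bucket(rh: int) -> int:
    """High-order BUCKET_BITS bits of the 32-bit RowHash."""
    return rh >> (32 - BUCKET_BITS)


def build_hash_map(num_amps: int) -> list[int]:
    """Hypothetical hash map: bucket -> AMP, assigned round-robin."""
    return [b % num_amps for b in range(NUM_BUCKETS)]


class ToyAMPs:
    def __init__(self, num_amps: int):
        self.hash_map = build_hash_map(num_amps)
        self.storage = [dict() for _ in range(num_amps)]  # per AMP: RowID -> row
        self.last_uniq = defaultdict(int)                 # RowHash -> last uniqueness value

    def insert(self, pi_value, row) -> tuple[int, int]:
        rh = row_hash(pi_value)
        amp = self.hash_map[hash_bucket(rh)]              # bucket -> AMP via the hash map
        self.last_uniq[rh] += 1                           # 1 for the first row with this RowHash
        row_id = (rh << 32) | self.last_uniq[rh]          # 64-bit: RowHash + uniqueness value
        self.storage[amp][row_id] = row
        return amp, row_id

    def rows_on(self, amp: int):
        """Rows on one AMP, in RowID order."""
        return [self.storage[amp][rid] for rid in sorted(self.storage[amp])]


amps = ToyAMPs(num_amps=4)
amp1, rid1 = amps.insert(1001, {"Employee_Id": 1001, "Name": "A"})
amp2, rid2 = amps.insert(1001, {"Employee_Id": 1001, "Name": "B"})  # NUPI duplicate
print(amp1 == amp2, rid1 >> 32 == rid2 >> 32, rid2 - rid1)  # True True 1
print(hash_bucket(0xABCD1234))  # 43981
```

Rows with the same PI value always land on the same AMP and share a RowHash; only the uniqueness value in the low 32 bits of the RowID tells them apart.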

2️⃣ Hashing Functions in Teradata

| Function | Description | Syntax |
|---|---|---|
| HASHROW | Returns the 32-bit RowHash for a column or expression list. | HASHROW(col1 [, col2 ...]) |
| HASHAMP | Returns the primary AMP number for a given Hash Bucket. | HASHAMP(hashbucket) |
| HASHBUCKET | Returns the Hash Bucket number for a RowHash; used with the Hash Map to locate the AMP. | HASHBUCKET(HASHROW(col1 [, col2 ...])) |
| HASHBAKAMP | Returns the fallback AMP number for a given Hash Bucket. | HASHBAKAMP(hashbucket) |

Combined as HASHAMP(HASHBUCKET(HASHROW(col))), these functions return the AMP that owns a given PI value; grouping a table by that expression and counting rows is a common way to check its distribution.

3️⃣ Example

Tables

CREATE SET TABLE Employee (

Employee_Id INTEGER,

Name VARCHAR(10),

Salary INTEGER

) PRIMARY INDEX(Employee_Id);

How a row is assigned:

- Employee_Id = 1001
- HASHROW(1001) → 32-bit RowHash = 0xABCD1234 (example value)
- High-order 16 bits (0xABCD) → Bucket number = 43981
- Hash Map → AMP #2 → row stored on AMP 2
- RowID = RowHash + Uniqueness ID → uniquely identifies the row.

4️⃣ Hash Collisions

Hash Collision: Two different PI values produce the same RowHash → same Hash Bucket.

Teradata handles collisions with:

- Uniqueness ID → incremented for rows with the same RowHash.
- RowID → 64-bit combination of RowHash + Uniqueness ID → unique row identifier.

✅ Even if multiple rows share the same Hash Bucket, Teradata can efficiently locate the correct row using RowID.

5️⃣ Hash Map

- Hash map is a translation table mapping Hash Buckets → AMP numbers.
- Each hash bucket in the map is assigned to a specific AMP.
- BYNET uses the hash map to route data to the correct AMP.

6️⃣ Visual Overview

[Client Query] → Parser → Hashing Algorithm → RowHash (32-bit)

→ High-order bits → Hash Bucket → Hash Map → AMP

→ AMP stores row with RowID (RowHash + Uniqueness ID)

=====================================================================

Partitioned Primary Index (PPI)

PPI is a Teradata feature that divides rows into partitions based on a defined column or expression, while still distributing rows across AMPs based on the Primary Index (PI).

👉 Data is distributed across AMPs (like normal PI)

👉 Within each AMP, data is divided into partitions

👉 Improves range query performance

A Partitioned Primary Index (PPI) helps avoid full table scans by physically organizing rows in partitions based on column values.

- Within each partition, rows are ordered by RowHash (the same hash used to pick the AMP).
- Queries that filter on the partitioning column access only the relevant partitions, improving performance.

Key Benefits:

Improves query performance for range-based queries.

Allows partition elimination, where irrelevant partitions are excluded at query time.

Ideal for incremental loads, archiving, and deletions.

⚙️ How PPI Works:

Hashing for Distribution:

PI → Hash Value → Determines which AMP stores the row.

Partitioning for Storage Order:

Once inside an AMP, rows are grouped into partitions.

Within each partition, data is sorted by Row Hash (row ID).

Partition Elimination:

The optimizer analyzes query filters (e.g., WHERE order_date BETWEEN …) and skips partitions that won't qualify. This reduces I/O and scan time dramatically.

SELECT * FROM sales WHERE order_date = DATE '2024-05-10';

👉 Only May partition scanned

👉 Faster query

SELECT *

FROM Sales

WHERE SaleDate = DATE '2024-05-10';

👉 Optimizer scans only May partition

👉 Other partitions skipped

👉 This is called Partition Elimination

You do NOT need to mention PARTITION.
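A small Python sketch of partition elimination, assuming the table is partitioned by month starting at 2024-01-01 (so partition 5 is May 2024). `month_partition`, `build_partitions`, `select_between`, and the `sale_date` key are hypothetical names, not Teradata APIs.

```python
from datetime import date


def month_partition(d: date, start: date = date(2024, 1, 1)) -> int:
    """1-based partition number for a monthly RANGE_N starting at `start`."""
    return (d.year - start.year) * 12 + (d.month - start.month) + 1


def build_partitions(rows):
    parts = {}
    for row in rows:
        parts.setdefault(month_partition(row["sale_date"]), []).append(row)
    return parts


def select_between(parts, lo: date, hi: date):
    """Partition elimination: only partitions overlapping [lo, hi] are scanned."""
    wanted = range(month_partition(lo), month_partition(hi) + 1)
    scanned = [p for p in sorted(parts) if p in wanted]
    rows = [r for p in scanned for r in parts[p] if lo <= r["sale_date"] <= hi]
    return rows, scanned


sales = [{"sale_date": date(2024, m, 10), "amount": m * 100} for m in range(1, 13)]
parts = build_partitions(sales)
rows, scanned = select_between(parts, date(2024, 5, 10), date(2024, 5, 10))
print(scanned, len(rows))  # [5] 1  (only the May partition is read)
```

The filter is evaluated against partition numbers first, so the other eleven partitions are never opened.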

SELECT *

FROM Sales

WHERE PARTITION = 5;

👉 Fetches rows only from partition 5

⚠ Not commonly used in business queries

⚠ Mostly for debugging / admin usage

🔹 Query

SELECT *

FROM Sales

WHERE PARTITION < 6;

🔎 What it means

👉 Return rows from Partitions 1 to 5

With one partition per month starting in January, that is the data for Jan, Feb, Mar, Apr, and May.

🔹 When NOT to Use PPI

❌ Small tables

❌ Queries without filter on partition column

❌ Very high insert workloads (slightly more overhead)

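Example: a RANGE_N partitioning expression with open-ended ranges (below 100, 100 up to 1000, 1000 and above) plus an UNKNOWN partition for NULL values:

PARTITION BY RANGE_N(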

totalorders BETWEEN *, 100, 1000 AND *,

UNKNOWN

);

🌲 Multilevel Partitioned Primary Index (MLPPI)

MLPPI allows sub-partitioning — i.e., a partition within a partition — for greater granularity and performance.

Example Use Case:

- Level 1 partition: by Claim_Date
- Level 2 (sub-partition): by State

This improves query efficiency when filters match both levels, enabling multi-level partition elimination.

In Teradata, when using Multi-Level Partitioned Primary Index (MLPPI), you can define the partitions using either:

- RANGE_N: for range-based partitioning (like dates, numbers)
- CASE_N: for custom logic or discrete values

🧩 Use RANGE_N When:

You are partitioning by a range of values (e.g., dates or numeric ranges).

Best for time-series data, like logs or transaction tables.

🧩 Use CASE_N When:

You want to partition using categorical values, custom conditions, or non-uniform ranges.

Good when data doesn't fit into neat, equal ranges.
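To make the two partitioning functions concrete, here are hypothetical Python stand-ins for their mapping rules (not Teradata's implementation). `range_n` mirrors the open-ended `RANGE_N(totalorders BETWEEN *, 100, 1000 AND *, UNKNOWN)` example earlier in this section, and `case_n` returns the position of the first true condition; how the UNKNOWN and NO CASE partitions are numbered is an assumption of this sketch.

```python
from bisect import bisect_right


def range_n(value, starts, *, unknown_partition=True):
    """Sketch of RANGE_N(col BETWEEN *, 100, 1000 AND *, UNKNOWN).

    `starts` are the range start points after the leading '*', so [100, 1000]
    gives (-inf, 100) -> 1, [100, 1000) -> 2, [1000, +inf) -> 3.
    NULL (None) goes to an extra UNKNOWN partition numbered after the ranges.
    """
    if value is None:
        if not unknown_partition:
            raise ValueError("NULL value and no UNKNOWN partition")
        return len(starts) + 2
    return bisect_right(starts, value) + 1


def case_n(value, conditions):
    """Sketch of CASE_N: 1-based position of the first true condition;
    len(conditions) + 1 plays the role of a NO CASE partition."""
    for i, cond in enumerate(conditions, start=1):
        if cond(value):
            return i
    return len(conditions) + 1


print([range_n(v, [100, 1000]) for v in (5, 100, 999, 1000, 50_000, None)])
# [1, 2, 2, 3, 3, 4]
region_rules = [lambda x: x == "CA", lambda x: x in ("NY", "NJ")]  # hypothetical rules
print([case_n(s, region_rules) for s in ("CA", "NY", "TX")])
# [1, 2, 3]
```

RANGE_N only needs sorted boundaries; CASE_N handles arbitrary, non-uniform conditions at the cost of evaluating them in order.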

=====================================================================

Column Partitioning stores columns separately instead of storing full rows together.

👉 Traditional Teradata = Row-based storage

👉 Column Partitioning = Column-based storage

👉 Improves performance for analytical queries

It can be applied to:

Tables (especially NoPI tables)

Join Indexes

🔹 Normal Table (Row Storage)

If table has 20 columns:

SELECT col1, col2 FROM big_table;

👉 Teradata reads all 20 columns from disk

👉 Higher I/O

🔹 Column Partitioning

👉 Data stored column-wise

👉 Only required columns read

SELECT col1, col2 FROM big_table;

👉 Only col1 and col2 scanned

👉 Reduced I/O

👉 Faster query
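A back-of-the-envelope Python model of the I/O difference, assuming 1,000,000 rows, 20 fixed-width 8-byte columns, and no compression or block overhead (real column-partitioned tables also benefit from autocompression). `bytes_scanned` is a hypothetical helper.

```python
def bytes_scanned(num_rows: int, col_widths: dict[str, int], needed: list[str], columnar: bool) -> int:
    """Bytes read by a full scan under a simplified model (no compression, no block overhead)."""
    if columnar:
        return num_rows * sum(col_widths[c] for c in needed)  # only the requested columns
    return num_rows * sum(col_widths.values())                # row store reads whole rows


widths = {f"col{i}": 8 for i in range(1, 21)}  # 20 columns x 8 bytes (assumed)
row_io = bytes_scanned(1_000_000, widths, ["col1", "col2"], columnar=False)
col_io = bytes_scanned(1_000_000, widths, ["col1", "col2"], columnar=True)
print(row_io, col_io, row_io / col_io)  # 160000000 16000000 10.0
```

Reading 2 of 20 equal-width columns cuts the scanned bytes by a factor of 10 in this model.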

🔹 Limitations

❌ Slightly slower inserts

❌ Not ideal for transactional workloads

❌ More complex storage structure

=========================================================================

Join Index (JI) in Teradata

A Join Index is a system-maintained physical structure that stores pre-joined or pre-aggregated data from one or more tables to speed up query performance.

👉 Reduces repeated join computation

👉 Stored physically like a table

👉 Can be row-based or columnar (Columnar Join Index = CJI)

Typical goals:

Eliminate base table joins

Reduce data redistribution

Avoid aggregate processing

Improve query response time

🔷 1. What is a Single-Table Join Index (STJI)?

A Single-Table Join Index is a special type of join index that:

Is defined on only one table.

Uses a different column as its Primary Index (PI) than the base table’s PI.

Helps optimize joins between foreign keys and primary keys—especially in star schema or snowflake models.

Avoids row redistribution and base table access during queries.

🧩 Why Use STJI?

Teradata stores rows based on the PI. If you frequently join on a non-PI column (e.g., a foreign key), Teradata may redistribute data to perform the join.

✅ STJI prevents redistribution by pre-sorting and rehashing rows using the foreign key (or any chosen column).

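What a single-table join index on orders, with customer_id as its PI, does:

Creates a copy of the orders table (or of selected columns), hashed on customer_id instead of order_id.

When a query joins orders.customer_id to customers.customer_id, Teradata can use this index.

No data redistribution is needed, because the join index and the customers table (PI on customer_id) are now hashed on the same column.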
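A toy Python count of how many orders rows would have to move for an AMP-local join on customer_id, with and without a copy hashed on customer_id. It assumes customers has its PI on customer_id, uses an MD5-based stand-in hash with a modulo in place of the hash map, and ignores the optimizer's other option of duplicating the smaller table; the helper names are hypothetical.

```python
import hashlib


def amp_of(value, num_amps: int) -> int:
    """Stand-in hash -> AMP mapping (MD5-based, not Teradata's real hash)."""
    return int.from_bytes(hashlib.md5(str(value).encode()).digest()[:4], "big") % num_amps


def rows_to_redistribute(orders, placement_key: str, num_amps: int) -> int:
    """Orders rows NOT already on the AMP that owns their customer_id.

    The matching customers row lives on amp_of(customer_id); every misplaced
    order row would have to move across the BYNET before an AMP-local join.
    """
    return sum(
        1 for o in orders
        if amp_of(o[placement_key], num_amps) != amp_of(o["customer_id"], num_amps)
    )


NUM_AMPS = 8
orders = [{"order_id": i, "customer_id": i % 500} for i in range(5_000)]

print(rows_to_redistribute(orders, "order_id", NUM_AMPS))     # base table: most rows must move
print(rows_to_redistribute(orders, "customer_id", NUM_AMPS))  # STJI-style copy: 0
```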

🔹 2. Multi-Table Join Index (MTJI) in Teradata

A Multi-Table Join Index (MTJI) is a Join Index that combines two or more base tables into a precomputed, stored join.

👉 Speeds up queries that join multiple tables frequently

👉 Can be row-based or columnar

👉 Stored physically like a table

🔹 Advantages

✅ Speeds up multi-table queries

✅ Reduces CPU & AMP load

✅ Precomputed join simplifies SQL

✅ Good for data warehouse reporting

🔹 Disadvantages

❌ Uses extra disk space

❌ Slower inserts/updates/deletes on base tables (index maintenance)

❌ Needs careful design to select only necessary columns

🔹 3. Aggregate Join Index (AJI) in Teradata

An Aggregate Join Index (AJI) is a precomputed, stored table that combines aggregation and joins.

👉 Optimized for analytical queries

👉 Stores aggregated values (SUM and COUNT; MIN and MAX in newer releases); AVG can be derived from SUM and COUNT

👉 Can be row-based or columnar

👉 Reduces query processing time on large fact tables

🔹 Advantages

✅ Improves query performance for aggregation queries

✅ Reduces CPU and I/O on large tables

✅ Simplifies complex queries for users

✅ Can be combined with columnar storage for further optimization

🔹 Disadvantages

❌ Extra storage required

❌ Insert/Update/Delete slower due to maintenance

❌ Needs careful selection of aggregation columns
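A simplified Python model of what an aggregate join index maintains: running SUM and COUNT per region, updated on every base-table insert, with AVG derived from them. `ToyAggregateJoinIndex` and its methods are hypothetical; real AJI maintenance also handles updates and deletes.

```python
from collections import defaultdict


class ToyAggregateJoinIndex:
    """Keeps SUM(amount) and COUNT(*) per region current on every insert."""

    def __init__(self, customer_region: dict[int, str]):
        self.customer_region = customer_region  # dimension lookup: cust_id -> region
        self.sums = defaultdict(float)
        self.counts = defaultdict(int)
        self.maintenance_ops = 0

    def on_insert_sale(self, cust_id: int, amount: float) -> None:
        region = self.customer_region[cust_id]  # the "join" part
        self.sums[region] += amount             # the "aggregate" part
        self.counts[region] += 1
        self.maintenance_ops += 1               # extra work on every base-table insert

    def total_by_region(self) -> dict[str, float]:
        return dict(self.sums)                  # answered without touching base rows

    def avg_by_region(self) -> dict[str, float]:
        return {r: self.sums[r] / self.counts[r] for r in self.sums}  # AVG = SUM / COUNT


aji = ToyAggregateJoinIndex({1: "EAST", 2: "WEST", 3: "EAST"})
for cust_id, amount in [(1, 100.0), (2, 40.0), (3, 50.0)]:
    aji.on_insert_sale(cust_id, amount)
print(aji.total_by_region())  # {'EAST': 150.0, 'WEST': 40.0}
print(aji.avg_by_region())    # {'EAST': 75.0, 'WEST': 40.0}
```

Reads become dictionary lookups, while every insert pays a small maintenance cost, which mirrors the advantages and disadvantages listed above.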

🔹 4. Columnar Join Index (CJI) in Teradata

A Columnar Join Index (CJI) is a precomputed, columnar storage index based on a join of one or more tables to improve query performance for analytical workloads.

👉 Combines benefits of Join Index (prejoin data) and Columnar storage (column-based access)

👉 Speeds up queries with aggregations, filters, and joins

🔹 How Columnar Join Index Works

1️⃣ Base tables: sales and customer

2️⃣ Query often executed:

SELECT c.region, SUM(s.amount)

FROM sales s

JOIN customer c

ON s.cust_id = c.cust_id

GROUP BY c.region;

3️⃣ CJI created:

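CREATE JOIN INDEX cji_sales_customer AS

SELECT s.cust_id, s.amount, c.region

FROM sales s

JOIN customer c

ON s.cust_id = c.cust_id

PRIMARY INDEX (cust_id)

PARTITION BY COLUMN;

(cust_id is included in the select list because a join index's primary index must be one of its own columns.)

⚠ Column-partitioned join indexes carry extra restrictions in some Teradata releases (for example, single-table only and no primary index); check the CREATE JOIN INDEX documentation for your release before relying on this multi-table form.

- Stores only the columns the query needs (amount and region, plus the join column)
- Precomputed join stored in columnar format
- Queries hitting the CJI skip join computation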

🔍 Full Table Scan (FTS)

A Full Table Scan happens when Teradata (or any database engine) reads every row in a table to find rows that match a query’s condition—because no index or partitioning strategy can narrow it down.

⚠️ This is often the least efficient way to access data, especially for large tables.

❌ Example: the Primary Index (PI) is on emp_id, but the query filters on dept_id (WHERE dept_id = 10), which is not indexed.

Result: Teradata must read every row to find those where dept_id = 10 → Full Table Scan.

=========================================================================

✅ Best Practices

Choose PKs based on business rules and uniqueness.

Choose PIs based on query patterns, join usage, and data distribution.

Don't always assume PK = PI. Analyze based on performance needs.

=====================================================================

🔹 Block-Level Compression (BLC)

| Feature | Description |
|---|---|
| Works at | Data block level |
| Compresses | Entire block of rows |
| Best For | Large tables |
| Enabled | Automatically in newer versions |

=====================================================================

🔹 Space in Teradata

In Teradata, Space means the disk storage allocated to a database or user to store tables, indexes, temporary data, etc.

👉 Space is allocated at Database/User level

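👉 Divided evenly across AMPs: each AMP gets an equal share of the limit, so a skewed table can run out of space on one AMP before the total is used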

👉 Measured in bytes (KB, MB, GB)

🔍 1. Perm Space

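Permanent Space (Perm Space) is the disk space allocated to store permanent database objects like tables, indexes, stored procedures, and UDFs. View and macro definitions are stored in the Data Dictionary, so a database with no perm space can still hold views and macros.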

👉 Stores actual business data

👉 Not automatically cleared

👉 Allocated to Database/User

👉 Distributed across AMPs

Definition: Maximum allowed space a user/database can occupy permanently.

Not pre-allocated, only consumed as needed.

Used for:

Table data (rows)

Stored procedures

User-Defined Functions (UDFs)

Required for creating tables.

| Feature | Description |
|---|---|
| Stores | Tables, indexes, stored procedures, UDFs |
| Allocated to | Database/User |
| Remains until | Object is dropped |
| Most important | Yes |

🔍 2. Spool Space

Spool Space is temporary disk space used by Teradata to store intermediate results while executing a query.

👉 Used during SELECT, JOIN, GROUP BY, ORDER BY

👉 Automatically cleared after query finishes

👉 If exceeded → query fails

Definition: Temporary space used during SQL execution.

Used for:

Sorting

Joining

Aggregation

Storing query results

Reclaimed automatically when the query ends.

Inherits limits from parent user/database unless explicitly set.

| Feature | Description |
|---|---|
| Used for | Intermediate query results |
| Automatically freed | After query ends |
| Very important | Yes (query may fail if exceeded) |

🔍 3. Temp Space

Temp Space (Temporary Space) is the disk space used to store Global Temporary Tables (GTTs) during a user session.

👉 Used only for temporary tables

👉 Data is session-specific

👉 Automatically cleared after session ends

Tables created in Temp Space survive restarts (not the data).

Treated like Perm Space, but temporarily allocated for session data.

| Feature | Description |
|---|---|
| Used for | Global Temporary Tables |
| Released | At session end |
| Stored in | Temp Space allocation |

4️⃣ Journal Space

Journal Space is the disk space used to store before and after images of rows for data recovery purposes.

👉 Helps in recovering data if transaction fails

👉 Used for rollback and permanent journal

| Feature | Description |
|---|---|
| Used for | Recovery |
| Stores | Before/After image of rows |
| Optional | Can be disabled |

=====================================================================

📌 COLLECT STATISTICS

COLLECT STATISTICS (a.k.a. COLLECT STATS) gathers data demographics (like row count, value distribution, uniqueness) on columns, indexes, or partitions.

Collect Statistics is a process in Teradata that gathers metadata about table columns or indexes to help the optimizer choose the best execution plan.

✅ These stats are used by the Teradata Optimizer to:

Choose optimal query plans

Decide whether to use an index or full table scan

Improve join strategies, aggregations, and access paths

💡 Why It Matters

Without collected stats, the optimizer falls back on random-AMP sampling and built-in heuristics (such as fixed selectivity assumptions) to guess row counts, which can result in:

Wrong join order

Full table scans

Skewed AMP workload

Longer response times

🔹 How It Helps Optimizer

- Determines row count and value distribution
- Helps decide the join strategy (Merge Join, Hash Join, Product Join)
- Helps decide how rows are placed across AMPs for a join (redistribute or duplicate)
- Reduces spool space usage

📊 What Does It Collect?

Distinct values count

Null count

Min/Max values

Frequency histogram

Row count

Skew factor

These help the optimizer estimate:

Selectivity (how many rows a filter returns)

Join cardinality

Join cost and order

Whether to use an index, join index, or full table scan
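A toy Python illustration of why demographics matter for selectivity estimates. The 10% fallback selectivity in `estimate_without_stats` is a hypothetical placeholder, not Teradata's actual heuristic, and `collect_stats` keeps exact per-value counts, whereas real Teradata histograms summarise values into intervals.

```python
from collections import Counter


def estimate_without_stats(row_count: int, guess_selectivity: float = 0.10) -> int:
    """No demographics: fall back to a fixed selectivity guess (10% is hypothetical)."""
    return round(row_count * guess_selectivity)


def collect_stats(values) -> dict:
    """Toy COLLECT STATISTICS: row count, distinct count, null count, min/max, frequencies."""
    non_null = [v for v in values if v is not None]
    return {
        "row_count": len(values),
        "distinct": len(set(non_null)),
        "nulls": len(values) - len(non_null),
        "min": min(non_null),
        "max": max(non_null),
        "freq": Counter(non_null),
    }


def estimate_with_stats(stats: dict, value) -> int:
    """Equality selectivity taken straight from the frequency information."""
    return stats["freq"].get(value, 0)


status = ["SHIPPED"] * 9_000 + ["OPEN"] * 900 + ["CANCELLED"] * 100
stats = collect_stats(status)
print(estimate_without_stats(len(status)))      # 1000 for every value
print(estimate_with_stats(stats, "SHIPPED"))    # 9000
print(estimate_with_stats(stats, "CANCELLED"))  # 100
```

The blind guess is 9x too low for SHIPPED and 10x too high for CANCELLED; estimates that far off are what lead to wrong join orders and oversized spool.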

🔹 SAMPLE in COLLECT STATISTICS (Teradata)

In Teradata Vantage, the SAMPLE option in COLLECT STATISTICS lets Teradata collect statistics from only a percentage of the table's rows instead of scanning the entire table.

👉 This reduces resource usage and improves performance when collecting stats on large tables.

✅ Why Use SAMPLE?

Full stats collection = Full table scan (expensive on big tables)

Using SAMPLE:

- Faster
- Less CPU usage
- Less I/O
- Less spool usage

Best for:

- Very large tables
- Frequently refreshed data
- Columns with good distribution

🔹 Types of SAMPLE

1️⃣ SYSTEM SAMPLE (Recommended)

COLLECT STATISTICS

USING SYSTEM SAMPLE

COLUMN (emp_id)

ON Employee;

✔ System decides optimal sample size

✔ Automatically balances accuracy vs performance

2️⃣ SAMPLE n PERCENT

COLLECT STATISTICS

USING SAMPLE 10 PERCENT

COLUMN (emp_id)

ON Employee;

✔ You control percentage

✔ n range: 2 – 100

✔ SAMPLE 100 PERCENT = Full statistics

🔹 Full Statistics

COLLECT STATISTICS COLUMN(emp_id) ON Employee;

- Scans 100% rows
- More accurate
- Slower

🔹 Sample Statistics (10%)

COLLECT STATISTICS

USING SAMPLE 10 PERCENT

COLUMN(emp_id)

ON Employee;

- Scans only 10% rows
- Faster
- Slightly less accurate

🔹 When to Use SAMPLE?

| Scenario | Use SAMPLE? |
|---|---|
| Huge fact table | ✅ Yes |
| Small table | ❌ No (collect full) |
| Highly skewed column | ❌ Better full stats |
| Nearly unique column | ✅ Sample works well |
| Partitioned table | ⚠ Depends |
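A rough Python illustration of the table above, using a deliberately naive extrapolation (distinct values found in the sample, scaled by 100 / percent). Teradata's real extrapolation is more sophisticated; `sampled_distinct_estimate` is a hypothetical helper that only shows the trend: sampling works for nearly unique columns and badly overestimates low-cardinality ones.

```python
import random


def sampled_distinct_estimate(values, percent: float, seed: int = 42) -> int:
    """Naive extrapolation: distinct values in the sample, scaled by 100 / percent."""
    rng = random.Random(seed)
    k = max(1, round(len(values) * percent / 100))
    sample = rng.sample(values, k)
    return round(len(set(sample)) * 100 / percent)


unique_ids = list(range(100_000))         # nearly unique column
flags = ["Y"] * 95_000 + ["N"] * 5_000    # low cardinality, skewed

print(len(set(unique_ids)), sampled_distinct_estimate(unique_ids, 10))  # 100000 100000
print(len(set(flags)), sampled_distinct_estimate(flags, 10))            # 2 20
```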

🔹 Collect Statistics on Partition Column (Teradata)

In Teradata Vantage, collecting statistics on a Partitioned Primary Index (PPI) column is very important for performance.

If you don't collect stats on partition columns, the optimizer may:

- Scan all partitions ❌
- Misestimate row counts ❌
- Generate large spool ❌

1️⃣ Basic Syntax

COLLECT STATISTICS

COLUMN (PARTITION)

ON sales_table;

✔ COLUMN (PARTITION) is special keyword

✔ Most useful on partitioned tables (it can also be collected on non-partitioned tables as a cheap row-count statistic)

2️⃣ Collect Stats on Partition + Column Together

COLLECT STATISTICS

COLUMN (PARTITION, order_date)

ON sales_table;

✔ Helps optimizer understand:

- Partition distribution
- Column distribution inside partition

3️⃣ Collect Stats on Partitioned Column Normally

If table is partitioned by:

PARTITION BY RANGE_N(order_date BETWEEN DATE '2024-01-01'

AND DATE '2026-12-31'

EACH INTERVAL '1' MONTH);

You should collect:

COLLECT STATISTICS COLUMN(order_date) ON sales_table;

AND

COLLECT STATISTICS COLUMN(PARTITION) ON sales_table;

Best practice → Collect both.

🔥 Example

CREATE TABLE sales_table

(

order_id INTEGER,

order_date DATE,

amount DECIMAL(10,2)

)

PRIMARY INDEX(order_id)

PARTITION BY RANGE_N(order_date

BETWEEN DATE '2024-01-01'

AND DATE '2025-12-31'

EACH INTERVAL '1' MONTH);

Collect stats:

COLLECT STATISTICS COLUMN(PARTITION) ON sales_table;

COLLECT STATISTICS COLUMN(order_date) ON sales_table;

🔹 Why Both Needed?

| Statistic | Purpose |
|---|---|
| PARTITION | Number of rows per partition |
| order_date | Value distribution inside partitions |

Together → Better partition pruning + better join plan.

🔹 USING THRESHOLD in COLLECT STATISTICS (Teradata)

In Teradata Vantage, THRESHOLD is used in COLLECT STATISTICS to avoid unnecessary recollection of stats.

👉 It improves performance by recollecting statistics only when needed.

✅ Why THRESHOLD is Needed?

Recollecting stats every time:

- Wastes CPU
- Consumes I/O
- Uses spool
- Slows batch jobs

THRESHOLD helps skip recollection if:

- Data change is small
- Stats are still recent

🔹 Types of THRESHOLD

1️⃣ THRESHOLD n PERCENT (Change-Based)

Recollect only if data changed by more than n%.

COLLECT STATISTICS

USING THRESHOLD 10 PERCENT

COLUMN(emp_id)

ON employee;

👉 If data changed < 10% → stats NOT recollected

👉 If data changed ≥ 10% → stats recollected

2️⃣ THRESHOLD n DAYS (Time-Based)

Recollect only if stats older than n days.

COLLECT STATISTICS

USING THRESHOLD 7 DAYS

COLUMN(emp_id)

ON employee;

👉 If stats collected 3 days ago → skipped

👉 If stats collected 10 days ago → recollected

3️⃣ Combined THRESHOLD (Both Conditions)

COLLECT STATISTICS

USING THRESHOLD 10 PERCENT AND THRESHOLD 5 DAYS

COLUMN(emp_id)

ON employee;

Recollection happens only if:

- Change ≥ 10%, AND
- Stats are older than 5 days

🔹 Remove Threshold

NO THRESHOLD

COLLECT STATISTICS

USING NO THRESHOLD

COLUMN(emp_id)

ON employee;

Remove Only Days Threshold

USING NO THRESHOLD DAYS

Remove Only Percent Threshold

USING NO THRESHOLD PERCENT

🔥 First Time Collection

If stats are collected for the first time:

- THRESHOLD is ignored
- Stats are collected
- Threshold is stored for future recollections
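The recollection decision can be sketched as a Python function. It follows the rules as written in these notes (a first collection ignores thresholds; with both thresholds set, both must be met). Boundary handling (`>=` for percent, strictly older for days) is an assumption, and `should_recollect` is a hypothetical name, not Teradata source.

```python
from typing import Optional


def should_recollect(
    has_existing_stats: bool,
    change_pct: float,
    age_days: int,
    threshold_pct: Optional[float] = None,
    threshold_days: Optional[int] = None,
) -> bool:
    """Decision rule as described in these notes (not Teradata's actual code)."""
    if not has_existing_stats:
        return True                                   # first collection: thresholds ignored
    checks = []
    if threshold_pct is not None:
        checks.append(change_pct >= threshold_pct)    # change-based threshold
    if threshold_days is not None:
        checks.append(age_days > threshold_days)      # time-based: stats older than n days
    return all(checks)                                # NO THRESHOLD -> always recollect


print(should_recollect(True, change_pct=4, age_days=30, threshold_pct=10))   # False
print(should_recollect(True, change_pct=12, age_days=3, threshold_days=7))   # False
print(should_recollect(True, change_pct=12, age_days=6,
                       threshold_pct=10, threshold_days=5))                  # True
print(should_recollect(False, change_pct=0, age_days=0, threshold_pct=10))  # True
```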

🔹 Creating a Table WITH DATA in Teradata

In Teradata Vantage, WITH DATA is used when you create a table from another table or query and want to copy both:

- ✅ Structure (columns, data types)

- ✅ Data

🔹 Example 1 — Copy Structure + Data

Source table:

CREATE TABLE employee
(
    emp_id INTEGER,
    emp_name VARCHAR(50),
    salary DECIMAL(10,2)
);

Insert data:

INSERT INTO employee VALUES (1,'John',50000);
INSERT INTO employee VALUES (2,'Alice',60000);

Create a backup table:

CREATE TABLE employee_bkp AS employee
WITH DATA;

🔹 Example 2 — Using SELECT

CREATE TABLE high_salary_emp AS
(
    SELECT * FROM employee
    WHERE salary > 55000
) WITH DATA;

🔹 Example 3: Copy Structure Only (WITH NO DATA)

CREATE TABLE empty_emp AS employee
WITH NO DATA;

👉 Only structure copied

👉 No rows copied
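Teradata itself is not available in a self-contained example, so this sketch swaps in SQLite (through Python's built-in sqlite3 module) to show the same idea: CREATE TABLE ... AS SELECT copies columns and rows, and an always-false filter copies the structure only. SQLite has no WITH DATA / WITH NO DATA clauses and copies no statistics, so treat this as an analogy, not Teradata syntax.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
cur = conn.cursor()

cur.execute("CREATE TABLE employee (emp_id INTEGER, emp_name VARCHAR(50), salary DECIMAL(10,2))")
cur.executemany("INSERT INTO employee VALUES (?, ?, ?)",
                [(1, "John", 50000), (2, "Alice", 60000)])

# Analog of: CREATE TABLE employee_bkp AS employee WITH DATA;
cur.execute("CREATE TABLE employee_bkp AS SELECT * FROM employee")

# Analog of: CREATE TABLE high_salary_emp AS (SELECT ... WHERE salary > 55000) WITH DATA;
cur.execute("CREATE TABLE high_salary_emp AS SELECT * FROM employee WHERE salary > 55000")

# Analog of: CREATE TABLE empty_emp AS employee WITH NO DATA;
# SQLite has no WITH NO DATA clause, so an always-false filter copies columns only.
cur.execute("CREATE TABLE empty_emp AS SELECT * FROM employee WHERE 1 = 0")


def row_count(table: str) -> int:
    return cur.execute(f"SELECT COUNT(*) FROM {table}").fetchone()[0]


def column_names(table: str) -> list:
    # PRAGMA table_info returns one row per column; index 1 is the column name.
    return [row[1] for row in cur.execute(f"PRAGMA table_info({table})")]


for t in ("employee_bkp", "high_salary_emp", "empty_emp"):
    print(t, row_count(t), column_names(t))
```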

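🔹 WITH DATA AND STATS

In Teradata Vantage, WITH DATA AND STATS is used to copy:

- ✅ Table structure

- ✅ Data

- ✅ Collected statistics

This is useful when a copy (for example, a backup or test table) must be planned like the original right away, without a separate COLLECT STATISTICS run.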

✅ Syntax

CREATE TABLE new_table AS old_table
WITH DATA AND STATS;

OR

CREATE TABLE new_table AS
(
    SELECT * FROM old_table
)
WITH DATA AND STATS;

🔹 What Exactly Gets Copied?

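| Component | Copied? |
|---|---|
| Columns | ✅ Yes |
| Data | ✅ Yes |
| Collected Statistics | ✅ Yes |
| Primary Index | ✅ Yes |
| Constraints | ❌ No |
| Triggers | ❌ No |
| Default values | ❌ No |

Note: the Primary Index row applies to the AS old_table form. With the AS (SELECT ...) form, the new table does not inherit old_table's Primary Index; declare one explicitly or Teradata assigns a default.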

🔥 Why Is WITH DATA AND STATS Important?

In Teradata, the optimizer depends heavily on statistics.

If you use only:

WITH DATA

The new table starts with no collected statistics, so the optimizer must fall back on estimates and query plans may degrade.

But:

WITH DATA AND STATS

👉 Preserves optimizer behavior

👉 Avoids recollecting statistics

👉 Saves time on large tables

=====================================================================

MERGE USING WHEN in Teradata

MERGE INTO is used to:

👉 UPDATE existing rows

👉 INSERT new rows

👉 In a single statement

It is commonly called an UPSERT operation.

MERGE is used to synchronize two tables by:

- Updating rows in the target table that match rows in the source table.

- Inserting rows into the target table that exist in the source but not in the target.

- Deleting matched target rows (WHEN MATCHED ... THEN DELETE), for example rows the source flags as deleted.

🔹 How It Works

| Condition | Action |
|---|---|
| Row exists in target | UPDATE |
| Row does not exist in target | INSERT |

🔥 Example

🎯 Target Table: Employee

| id | name | salary |
|---|---|---|
| 1 | John | 5000 |
| 2 | Mike | 6000 |

🎯 Source Table: Employee_Stage

| id | name | salary |
|---|---|---|
| 2 | Mike | 6500 |
| 3 | Alice | 7000 |

MERGE Query

MERGE INTO employee tgt
USING employee_stage src
ON tgt.id = src.id
WHEN MATCHED THEN
    UPDATE SET salary = src.salary
WHEN NOT MATCHED THEN
    INSERT (id, name, salary)
    VALUES (src.id, src.name, src.salary);

✅ Result After MERGE

| id | name | salary |
|---|---|---|
| 1 | John | 5000 |
| 2 | Mike | 6500 |
| 3 | Alice | 7000 |
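The UPDATE/INSERT decision can be modeled in a few lines of Python, with each table as a dictionary keyed by id (the column in the ON clause). This is a hypothetical model of the semantics, not how Teradata executes MERGE; it reproduces the result table above.

```python
from typing import Dict, Tuple

Row = Tuple[str, int]  # (name, salary)


def merge_upsert(target: Dict[int, Row], source: Dict[int, Row]) -> Dict[int, Row]:
    """Hypothetical model of MERGE ... ON tgt.id = src.id with UPDATE / INSERT.

    Tables are dicts keyed by id; the input target is not modified.
    """
    result = dict(target)
    for key, (name, salary) in source.items():
        if key in result:
            # WHEN MATCHED THEN UPDATE SET salary = src.salary (name unchanged)
            result[key] = (result[key][0], salary)
        else:
            # WHEN NOT MATCHED THEN INSERT (id, name, salary)
            result[key] = (name, salary)
    return result


employee = {1: ("John", 5000), 2: ("Mike", 6000)}
employee_stage = {2: ("Mike", 6500), 3: ("Alice", 7000)}
print(merge_upsert(employee, employee_stage))
# {1: ('John', 5000), 2: ('Mike', 6500), 3: ('Alice', 7000)}
```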

🔹 Multiple WHEN Conditions

Teradata allows conditions inside WHEN MATCHED.

WHEN MATCHED AND tgt.salary <> src.salary THEN
    UPDATE SET salary = src.salary

👉 Update only if salary changed.
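A Python model of the conditional WHEN MATCHED clause; update_if_changed is a hypothetical name. Only the matched branch is modeled, and the return value counts rows that were actually rewritten, which is the work the extra condition avoids.

```python
def update_if_changed(target, source):
    """Hypothetical model of WHEN MATCHED AND tgt.salary <> src.salary THEN UPDATE.

    target/source: dicts of id -> (name, salary). Updates target in place
    and returns how many rows were actually rewritten.
    """
    updated = 0
    for key, (_, new_salary) in source.items():
        if key in target and target[key][1] != new_salary:
            target[key] = (target[key][0], new_salary)
            updated += 1
    return updated


employee = {1: ("John", 5000), 2: ("Mike", 6000)}
employee_stage = {1: ("John", 5000), 2: ("Mike", 6500)}
print(update_if_changed(employee, employee_stage))  # 1: only Mike's row changes
```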

🔹 DELETE with MERGE

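WHEN MATCHED AND src.status = 'D' THEN
    DELETE

Used in SCD Type 1 loads where the source marks deleted records (status = 'D'). Note that this is a physical delete; a soft delete would instead UPDATE a flag column.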
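A Python sketch of matched-row handling with a delete flag. It assumes the source carries a status column, that the DELETE clause is evaluated before an unconditional UPDATE, and it ignores unmatched source rows; which combinations of WHEN MATCHED clauses Teradata accepts should be checked in its MERGE documentation.

```python
def merge_with_delete(target, source):
    """Hypothetical model of matched-row handling with a delete flag.

    target: id -> (name, salary); source: id -> (name, salary, status).
    Only WHEN MATCHED logic is modeled; unmatched source rows are skipped.
    """
    for key, (_, salary, status) in source.items():
        if key not in target:
            continue
        if status == "D":
            del target[key]                         # WHEN MATCHED AND src.status = 'D' THEN DELETE
        else:
            target[key] = (target[key][0], salary)  # WHEN MATCHED THEN UPDATE
    return target


employee = {1: ("John", 5000), 2: ("Mike", 6000)}
employee_stage = {1: ("John", 5000, "D"), 2: ("Mike", 6500, "U")}
print(merge_with_delete(employee, employee_stage))  # {2: ('Mike', 6500)}
```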

🔥 Important Rules in Teradata

1️⃣ Target table must be a base table (not view)

2️⃣ Cannot update Primary Index column

3️⃣ Only one source row should match a target row (else error)

4️⃣ Works best when the ON condition is on the target table's Primary Index (PI)
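Rule 3 is easy to violate when the staging table holds duplicates. A pre-check, sketched in Python with hypothetical names, flags ids that appear more than once in the source and also exist in the target; in SQL the same check is typically a GROUP BY id HAVING COUNT(*) > 1 query on the source table.

```python
from collections import Counter


def ambiguous_keys(source_rows, target_keys, key_index=0):
    """Hypothetical check: source keys that would hit one target row more than once."""
    counts = Counter(row[key_index] for row in source_rows)
    return sorted(k for k, n in counts.items() if n > 1 and k in target_keys)


employee_keys = {1, 2}
employee_stage = [
    (2, "Mike", 6500),
    (3, "Alice", 7000),
    (2, "Mike", 6800),  # second source row for id 2
]
print(ambiguous_keys(employee_stage, employee_keys))  # [2]
```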

🔥 MERGE vs INSERT + UPDATE

| Feature | MERGE | Separate INSERT + UPDATE |
|---|---|---|
| Single pass | ✅ Yes | ❌ No |
| Faster | ✅ Yes | ❌ Slower |
| Cleaner logic | ✅ Yes | ❌ More code |

🔥 MERGE on Non-Equality Condition in Teradata

In Teradata Vantage, the MERGE statement is primarily designed for equality joins.

❗ Important Rule

👉 In Teradata, the ON condition of MERGE must use equality (=) conditions.

You cannot directly use:

ON tgt.date BETWEEN src.start_date AND src.end_date ❌

ON tgt.amount > src.min_amt ❌

ON tgt.id <> src.id ❌

These are not supported as MERGE match conditions, so the statement fails.

💡 Why?

MERGE works like a row-by-row matched update.

Teradata requires:

- A deterministic match

- At most one source row per target row

- AMP-local matching using hash distribution

Non-equality conditions break that logic.
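The sketch below shows, with hypothetical data, why a range condition makes the match ambiguous: two overlapping date ranges both contain a June date, so one target row would have two candidate source rows. An equality lookup on a unique key, by contrast, returns at most one row. It is a Python illustration, not Teradata internals.

```python
from datetime import date

# Hypothetical source rows: (band, start_date, end_date); ranges overlap in June.
bands = [
    ("A", date(2024, 1, 1), date(2024, 6, 30)),
    ("B", date(2024, 6, 1), date(2024, 12, 31)),
]


def range_matches(d: date) -> list:
    """Source rows a target row with date d would match under BETWEEN."""
    return [band for band, start, end in bands if start <= d <= end]


def equality_match(band_key: str):
    """Equality on a unique key: at most one source row (or None) can match."""
    by_band = {band: (start, end) for band, start, end in bands}
    return by_band.get(band_key)


print(range_matches(date(2024, 3, 10)))  # ['A']
print(range_matches(date(2024, 6, 15)))  # ['A', 'B']: two candidate source rows
print(equality_match("B"))               # a single (start, end) pair
```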

🔥 What To Do Instead?

There are 3 common approaches 👇

✅ Option 1: Use Derived Table (Recommended)

Pre-filter the source using a join, then merge using equality.

Example Scenario

Update salary based on a date-range condition.

❌ Not allowed: